Who reviews AI generated content in a company depends on the team structure and industry. Content editors, marketing managers, legal teams, compliance officers, and subject matter experts share this responsibility. Each reviewer checks AI output for a specific purpose: editors focus on tone and readability, legal teams check for compliance risks, and subject matter experts verify factual accuracy before any content goes live.

Larger organizations often have a dedicated AI governance team or content operations function that owns the full review workflow. Smaller businesses typically assign one content lead to handle all review stages. Regardless of company size, publishing AI generated content without human review is a risk no business should take. Unreviewed content puts brand credibility, search performance, and, in regulated industries, legal standing at risk.

What Does AI Content Review Mean in a Business Context

AI content review is the process of a human checking, editing, and approving content that was created or assisted by an AI tool before it is published or distributed. It is not just proofreading. It involves fact checking, brand voice alignment, legal review, and sometimes a full rewrite.

As AI writing tools like ChatGPT, Gemini, and Claude become standard in content workflows, businesses need clear systems to ensure the output meets their quality bar. Without human oversight, AI content can include factual errors, generic messaging, or even legally problematic claims. To keep output reliable, many teams now also use dedicated AI content evaluator tools that check quality, accuracy, and compliance as part of their standard workflow.

Who Reviews AI Generated Content in Companies

Different people within a company may be involved in reviewing AI content, depending on the content type and the industry. Here are the most common roles involved:

1. Content Editors and Senior Writers

These are usually the first line of review. They check grammar, readability, tone of voice, and structural flow. They also ensure the content matches the brand guidelines and does not sound robotic or off-brand.

In many content teams, the editor makes the final call on whether the AI draft is usable or needs a complete rework. Their job is not only to fix AI errors. It is to ensure readers get value from the content.

2. Marketing Managers

Marketing managers review AI content for strategic alignment. They check whether the messaging supports campaign goals, whether the calls to action are correct, and whether the content fits within the broader content calendar.

They often approve final drafts before the content goes to the publishing stage. In some organizations, they are the last approver after the editor and legal team have already reviewed the content.

3. Legal and Compliance Teams

This is especially important in regulated industries like finance, healthcare, insurance, and legal services. Legal teams review AI content to ensure no claims are made that could create liability, violate regulations, or mislead consumers.

AI tools can sometimes generate content that sounds authoritative but contains unverified or false claims. Legal teams catch these issues before publication to protect the company.

4. Subject Matter Experts

When AI content covers technical topics such as medicine, engineering, finance, or law, subject matter experts are brought in to verify accuracy. SMEs read the content for factual correctness and depth, not just language quality.

For example, a pharmaceutical company might have a medical reviewer check all health content. A fintech firm might have a certified financial analyst review investment-related articles. This type of specialized human review closely mirrors the key responsibilities of a search engine evaluator job on platforms like Google and Bing.

5. SEO Specialists

SEO reviewers check AI generated content for keyword relevance, search intent alignment, meta data quality, and internal linking opportunities. They make sure the content will actually perform in search and not just sound good on paper.

6. AI Governance or Content Operations Teams

Larger companies are now building dedicated AI governance functions. These teams set the rules around how AI can be used, what tools are approved, what data can be fed into AI systems, and how the review process should work across departments.

Roles involved in AI content review and their focus areas

| Role | What They Review | Industries Most Common In |
|---|---|---|
| Content Editor | Tone, grammar, readability, brand voice | All industries |
| Marketing Manager | Campaign alignment, CTA accuracy, strategy fit | All industries |
| Legal Team | Claims, disclaimers, regulatory language | Finance, Healthcare, Legal, Insurance |
| Subject Matter Expert | Technical accuracy, depth, factual correctness | Healthcare, Engineering, Tech, Finance |
| SEO Specialist | Keywords, search intent, meta data, links | Marketing, E-commerce, Publishing |
| AI Governance Team | Policy compliance, tool usage, data handling | Enterprise, Finance, Government |

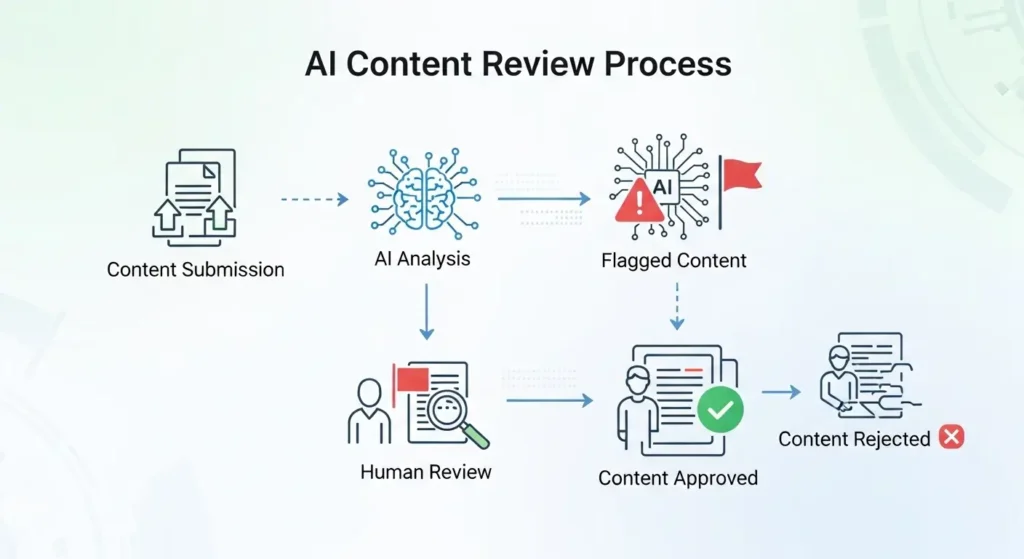

How the AI Content Review Process Works

The review process for AI generated content usually follows a multi-stage workflow. Here is how it typically works in practice:

- Stage 1: AI Draft Generation. A writer or content strategist uses an AI tool to generate an initial draft based on a prompt or brief.

- Stage 2: Initial Human Edit. An editor reads the draft, corrects errors, adjusts tone, and ensures the structure makes sense.

- Stage 3: Fact-Checking. Claims, statistics, and references are verified manually or with a fact-checking tool.

- Stage 4: Legal or Compliance Review. Relevant content is passed to legal or compliance teams, especially in regulated industries.

- Stage 5: SEO and Technical Review. The SEO team checks keyword usage, meta descriptions, and search optimization elements.

- Stage 6: Final Approval. A manager or head of content gives final sign-off before publication.
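
For teams that track sign-offs in a script or internal tool, the six stages above could be modeled roughly as follows. This is only a minimal sketch: the `Draft` class, the `regulated` flag, and the helper functions are hypothetical names chosen for illustration, and the rule that non-sensitive content skips the legal stage is an assumption based on the "relevant content" wording in Stage 4.

```python
from dataclasses import dataclass, field

# Stage names follow the six stages listed above; everything else is hypothetical.
STAGES = [
    "AI Draft Generation",
    "Initial Human Edit",
    "Fact-Checking",
    "Legal or Compliance Review",
    "SEO and Technical Review",
    "Final Approval",
]


@dataclass
class Draft:
    title: str
    regulated: bool = False  # assumption: marks content that needs legal review
    completed: list = field(default_factory=list)  # stages signed off so far


def required_stages(draft: Draft) -> list:
    """Return the stages this draft must pass, in order."""
    if draft.regulated:
        return list(STAGES)
    # Assumption: non-sensitive content skips the legal stage.
    return [s for s in STAGES if s != "Legal or Compliance Review"]


def sign_off(draft: Draft, stage: str) -> None:
    """Record a sign-off, refusing to skip ahead of the next pending stage."""
    pending = [s for s in required_stages(draft) if s not in draft.completed]
    if not pending:
        raise ValueError("all stages are already signed off")
    if stage != pending[0]:
        raise ValueError(f"expected {pending[0]!r}, got {stage!r}")
    draft.completed.append(stage)


def ready_to_publish(draft: Draft) -> bool:
    """True only when every required stage has been signed off in order."""
    return draft.completed == required_stages(draft)
```

The point of the sketch is the ordering check in `sign_off`: a draft cannot reach final approval until each earlier stage has been recorded, which is the same guarantee a documented review chain is meant to provide.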

Real Examples of Who Reviews AI Content in Different Company Types

Startup or Small Business

In a startup with a small team, one person usually handles most of this. A content marketer or founder might use AI to draft content, then spend time editing it personally before publishing. There is rarely a formal review chain.

Mid-Size Company

A mid-size company usually has a content team of two to five people. AI drafts move through an editor, then a marketing manager. Legal review happens only for sensitive content like financial advice or health claims.

Large Enterprise

Large companies often have a formal content operations team, an AI policy in place, and multiple review stages. Different departments may have their own review processes, and an AI governance team oversees compliance across all teams. Many global enterprises also partner with specialist evaluation firms. If you want to see which types of organizations are actively building these human review capabilities, the list of top companies hiring for digital evaluation work gives a clear picture of how seriously this is now being treated at scale.

Review complexity based on company size

| Company Size | Who Typically Reviews | Review Complexity |
|---|---|---|
| Small (1-20 employees) | Founder or single content person | Low, informal |
| Mid-size (20-250) | Editor + marketing manager | Medium, semi-structured |
| Enterprise (250+) | Full review chain with legal, SME, SEO, AI governance | High, structured and documented |

Common Mistakes Companies Make When Reviewing AI Content

- Skipping fact-checking entirely. AI tools can confidently state wrong information. Not verifying facts is a major risk, especially for regulated industries.

- Only checking grammar and not intent. An AI draft can be grammatically perfect but miss the point of what the audience actually needs.

- Letting AI content publish without any human review. This is the most dangerous mistake. No matter how good the AI tool, human review is non-negotiable for brand trust and legal safety.

- Not having a defined review process. When no one knows who is responsible for reviewing what, things fall through the cracks.

- Assuming AI content is always original. AI can sometimes reproduce content that is similar to existing published material. A plagiarism check should be part of the workflow.

- Not updating review guidelines as AI tools evolve. AI capabilities change quickly. Review standards that worked six months ago may not cover new risks today.

Best Practices for Reviewing AI Generated Content in Your Organization

- Assign clear ownership for each type of content so everyone knows their role in the review chain.

- Create a review checklist that covers tone, accuracy, legal language, SEO, and brand alignment.

- Use AI detection tools as a supplement, not a replacement, for human review.

- Establish a style guide that all reviewers and AI prompts follow to ensure consistency.

- Run periodic audits on published AI content to catch quality issues over time.

- Document all review decisions so you can track issues and improve your process.

- Train your review team on how AI tools work so they know what to watch out for.

- Set up separate review workflows for different content types such as blog posts, legal notices, social media, and product copy.

AI content review checklist by area

| Review Area | What to Check |
|---|---|
| Accuracy | Verify all statistics, dates, names, and factual claims against credible sources |
| Brand Voice | Check tone, language style, and alignment with your style guide |
| Legal Compliance | Flag any unsubstantiated claims, missing disclaimers, or regulated language |
| SEO Quality | Confirm keyword use, meta data, headings, and internal links |
| Originality | Run a plagiarism check to ensure the content is not too similar to existing material |
| Audience Fit | Confirm the content meets the needs and knowledge level of the intended reader |
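
If the checklist lives in a spreadsheet or script rather than a document, it could be represented along these lines. This is a rough sketch, not a prescribed tool: the `CHECKLIST` dictionary simply mirrors the table above, and `outstanding_areas` is a hypothetical helper that reports which areas still need a reviewer's sign-off.

```python
# Review areas and checks copied from the table above.
CHECKLIST = {
    "Accuracy": "Verify statistics, dates, names, and factual claims",
    "Brand Voice": "Check tone, language style, and style guide alignment",
    "Legal Compliance": "Flag unsubstantiated claims and missing disclaimers",
    "SEO Quality": "Confirm keyword use, meta data, headings, and internal links",
    "Originality": "Run a plagiarism check",
    "Audience Fit": "Confirm the content fits the intended reader",
}


def outstanding_areas(signed_off: set) -> list:
    """Return checklist areas that have not been signed off, in table order."""
    unknown = signed_off - set(CHECKLIST)
    if unknown:
        # Guard against typos such as "SEO" instead of "SEO Quality".
        raise ValueError(f"unknown review areas: {sorted(unknown)}")
    return [area for area in CHECKLIST if area not in signed_off]


# Example: two areas reviewed so far, four still open.
remaining = outstanding_areas({"Accuracy", "Brand Voice"})
for area in remaining:
    print(f"{area}: {CHECKLIST[area]}")
```

A piece would be cleared for publication only when `outstanding_areas` returns an empty list.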

Conclusion

Understanding who reviews AI generated content in companies is no longer a nice-to-have question. It is a core part of responsible content operations. From small startups to global enterprises, every organization using AI tools needs a clear answer to this question, backed by a real workflow.

The companies that get this right will produce content that is accurate, trustworthy, legally safe, and genuinely useful to their audiences. The ones that skip review will eventually pay the price through damaged reputation, regulatory penalties, or poor content performance.

KEY TAKEAWAYS

- Content editors, marketing managers, legal teams, SEO specialists, and SMEs all play a role in reviewing AI content

- The review process should cover accuracy, brand voice, legal compliance, SEO quality, and originality

- Company size directly affects how formal and multi-layered the review process needs to be

- Skipping fact-checking or publishing without any human review are the most dangerous mistakes

- Large enterprises increasingly rely on dedicated AI governance teams to manage content oversight at scale

- A documented review checklist and clear role assignment are the foundation of a reliable AI content workflow

FAQs

1. Do companies always need a human to review AI content?

Yes. Human review is essential. AI tools can produce content that sounds convincing but contains factual errors, lacks brand personality, or creates legal exposure. No responsible company should publish AI content without at least one layer of human review.

2. How many people are typically involved in reviewing AI generated content?

This varies by company size. In small businesses, one or two people review all content. In large enterprises, the review chain can involve four to six roles including editors, legal teams, SMEs, and SEO specialists.

3. What tools do content reviewers use when checking AI content?

Reviewers commonly use grammar tools like Grammarly, plagiarism checkers like Copyscape, AI detection tools like Originality.ai, and fact-checking platforms. SEO reviewers often use tools like Ahrefs, Semrush, or Surfer SEO.

4. Who is legally responsible if AI content causes harm or spreads false information?

In general, the company that published the content is responsible, not the AI tool provider, although the details vary by jurisdiction. This is why legal review before publication is so important, especially in regulated industries where content can directly affect consumer decisions or safety.

5. Should AI content review be the same as regular editorial review?

Not entirely. AI content review should include all standard editorial checks plus additional layers for factual verification, originality, and AI-specific issues like hallucinated sources, over-confident claims, and generic phrasing that does not reflect real brand expertise.