AI answers validation before deployment is the process of testing, evaluating, and verifying that an artificial intelligence system produces accurate, safe, and reliable responses before it is made available to real users. This process involves multiple layers of checks, including automated benchmarking, human review, red teaming, and adversarial testing, all aimed at ensuring that the AI behaves as intended across a wide range of inputs.

Without rigorous validation, AI models risk producing harmful, biased, or factually incorrect answers at scale. Leading AI companies run validation pipelines that combine rule-based filters, model-based scoring, expert human evaluators, and real world simulation before any public release. Understanding how this process works helps businesses, developers, and end users make informed decisions about which AI systems to trust.

What Is AI Answers Validation Before Deployment

AI answers validation refers to a structured quality assurance process applied to large language models (LLMs) and other AI systems before they go live. Think of it as the final inspection on a production line, except instead of checking physical parts, engineers are checking for reasoning errors, hallucinations, policy violations, and bias.

This process is not a single step. It is a layered pipeline that begins during model training and continues through post-deployment monitoring. Each layer catches different categories of failure, and together they form the safety net between raw model output and what users actually see.

Why It Matters

AI systems learn from massive datasets. That means they can pick up incorrect information, outdated facts, or problematic patterns. Validation is what stands between a capable but imperfect model and real world harm or embarrassment for the deploying organization.

Even after a model passes internal tests, its answers can still mislead users in subtle ways. That is precisely ai answers human verification as an ongoing layer of oversight, not just a one-time checkpoint before release.

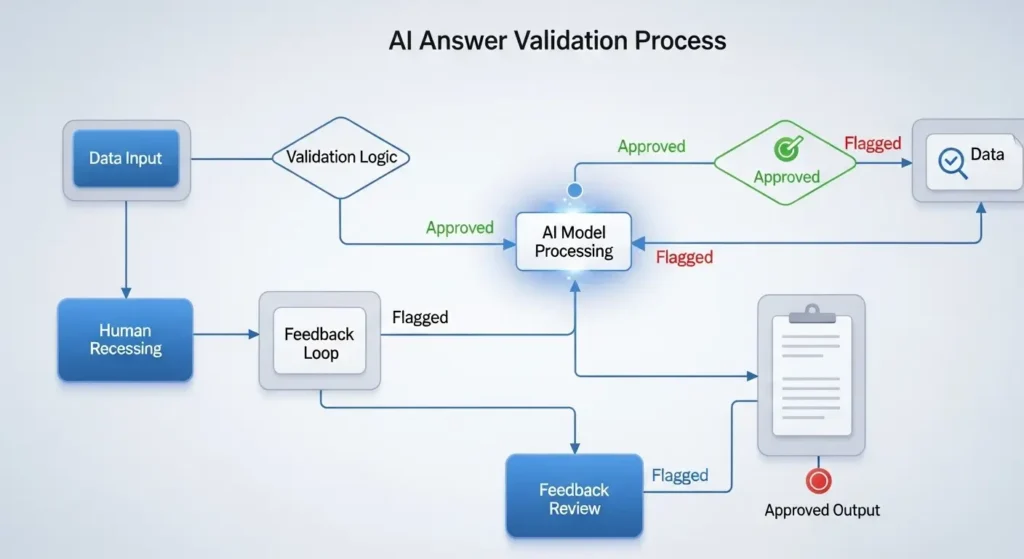

How the AI Answer Validation Process Works

The validation pipeline for AI answers typically flows through several distinct phases. Here is a step by step breakdown of how most responsible AI teams approach this:

Phase 1: Benchmark Testing

Before anything else, the model is run against standardized benchmarks. These are curated datasets with known correct answers. Performance on benchmarks gives teams a numerical baseline for accuracy, reasoning ability, and knowledge coverage.

Phase 2: Automated Evaluation

Automated tools check millions of model responses against predefined criteria. This includes checking for factual consistency, detecting toxic or harmful language, flagging hallucinations, and verifying that the model follows formatting rules. Tools like G Eval, MT Bench, and custom classifiers are common here.

Selecting the right tooling at this phase has a significant impact on how many failure modes get caught early. A review of the best AI content evaluator tools for quality, accuracy, and compliance shows how platforms like Scale AI, Galileo, and similar services handle accuracy scoring, bias detection, and compliance monitoring at scale within modern validation pipelines.

Phase 3: Human Evaluation

Human reviewers, often called raters or annotators, score model responses on dimensions like helpfulness, honesty, harmlessness, and coherence. This phase catches nuances that automated tools often miss, such as subtle sycophancy, cultural insensitivity, or misleading framing.

Phase 4: Red Teaming

A dedicated team attempts to break the model by crafting adversarial prompts. The goal is to find failure modes before bad actors do. Red-teamers try jailbreaks, prompt injections, role-play exploits, and edge cases that normal users would never think to try.

Interestingly, the skills used by professional red teamers overlap significantly with those exercised by search quality raters. Understanding what makes a result accurate, relevant, and trustworthy sits at the core of both roles. Professionals who work as a search engine evaluator develop exactly the kind of analytical judgment that AI validation teams rely on when stress testing model responses against real-world user intent.

Phase 5: Safety and Policy Review

Every response the model can plausibly generate is checked against the organization’s safety policies. This includes checking for content that is illegal, dangerous, or violates terms of service. Policy filters are both rule based and model assisted.

Phase 6: Staged Rollout

Even after passing all internal checks, most teams deploy to a small percentage of users first. This canary deployment lets engineers catch real-world failure modes that labs cannot fully anticipate in controlled testing environments.

| Validation Phase | Method | What It Catches | Speed |

|---|---|---|---|

| Benchmark Testing | Automated datasets | Accuracy, reasoning gaps | Fast |

| Automated Evaluation | Classifiers, scoring models | Toxicity, hallucinations, formatting | Fast |

| Human Evaluation | Rater panels | Nuance, tone, subtle errors | Slow |

| Red-Teaming | Adversarial prompts | Jailbreaks, policy violations | Medium |

| Safety Review | Rule-based + AI filters | Harmful, illegal content | Fast |

| Staged Rollout | Real-world monitoring | Unknown unknowns | Ongoing |

Key Factors in AI Answer Validation

Not all validation is equal. These are the core dimensions that evaluators measure when assessing whether an AI answer is ready for deployment:

- Factual accuracy: Does the answer reflect verified, up-to-date information?

- Coherence: Is the response logically structured and easy to follow?

- Groundedness: For retrieval-augmented systems, does the answer stay within the provided source material?

- Harmlessness: Does the output avoid promoting dangerous, abusive, or illegal behavior?

- Calibration: Does the model express appropriate uncertainty instead of stating guesses as facts?

- Instruction-following: Does the model do what the user actually asked?

- Bias and fairness: Does the model treat different groups equitably across similar prompts?

Real Examples of AI Validation Frameworks

OpenAI and RLHF

OpenAI pioneered Reinforcement Learning from Human Feedback (RLHF) as a core validation tool. Human raters rank model outputs, and those rankings train a reward model that guides further fine-tuning. This loop has been central to the improvement of the GPT series.

Anthropics Constitutional AI

Anthropic uses a technique where the model itself critiques and revises its own answers based on a written set of principles called a constitution. This self-critique loop, combined with human oversight, allows for scalable safety validation without requiring human review of every single response.

Google DeepMind and Sparrow

DeepMind’s Sparrow project demonstrated rule-based reward models where human raters flag responses that violate predefined rules. This approach shows how structured rule sets can be embedded directly into the validation reward signal during training.

| Organization | Primary Validation Approach | Key Strength | Known Limitation |

|---|---|---|---|

| OpenAI | RLHF with human raters | Strong alignment with user preferences | Rater subjectivity and cost |

| Anthropic | Constitutional AI + RLHF | Scalable self-critique loop | Constitution design requires care |

| Google DeepMind | Rule-based reward models | Transparent, auditable rules | Rigid rules miss novel scenarios |

| Meta AI | Benchmark-heavy evaluation | Fast and reproducible | Benchmarks can be gamed or saturated |

Common Mistakes in AI Answer Validation

Even experienced teams make errors in their validation processes. Here are the most common pitfalls:

Over relying on benchmarks

Benchmark scores look impressive but they do not capture real-world complexity. A model that scores 90% on MMLU may still hallucinate confidently when a user asks a niche industry question. Benchmarks should be a starting point, never the final word.

Neglecting edge cases

Most validation focuses on typical inputs. But users are unpredictable. Failing to test unusual, ambiguous, or adversarial prompts leaves dangerous blind spots that only surface after deployment.

Insufficient diversity in human raters

If the team reviewing AI answers is demographically homogenous, they will miss failures that affect underrepresented groups. Diverse evaluator panels produce more robust safety coverage.

Treating validation as a one time event

Model behavior can drift over time, especially if the model continues to learn from user feedback. Validation must be ongoing, with regular re-evaluations triggered by model updates or shifts in user behavior.

Ignoring distribution shift

The prompts used in internal testing often look very different from what real users send. This gap, called distribution shift, means validation results in the lab may not predict real-world performance accurately.

Best Practices for Validating AI Answers Before Deployment

For teams building or deploying AI systems, these practices consistently produce better validation outcomes:

- Build a diverse eval suite that covers factual QA, reasoning tasks, creative tasks, and adversarial prompts.

- Use model-based evaluation (LLM as judge) alongside human evaluation to scale coverage without sacrificing quality signals.

- Run structured red team exercises at least once per major release, with participants outside the core development team.

- Track regressions across versions using the same held out test sets so you can detect when new training degrades old capabilities.

- Log real user interactions with appropriate consent and use them to continuously update and expand your validation datasets.

- Set minimum passing thresholds for each safety and quality dimension before any deployment is authorized.

- Document all validation findings, including failures, so future teams can learn from them.

| Practice | Benefit | Who It Applies To |

|---|---|---|

| LLM-as-judge scoring | Scales evaluation without large rater teams | Teams with limited human review budget |

| Diverse eval suites | Covers more failure modes across domains | All AI teams |

| Regression testing | Prevents quality degradation across versions | Teams shipping frequent model updates |

| Red-teaming by external parties | Fresh perspectives find novel attack vectors | High-stakes or public-facing deployments |

| Post-deployment monitoring | Catches real-world failures missed in testing | All deployed AI products |

Conclusion

AI answers validation before deployment is not a luxury. It is the foundation of trustworthy AI. As these systems take on more important roles in healthcare, education, finance, and everyday decision-making, the rigor of the validation process directly determines how much users can rely on what they are told.

The field is evolving quickly. Techniques like constitutional AI, LLM as judge evaluation, and adversarial red-teaming represent meaningful progress, but there is still no perfect system. The most responsible approach is continuous validation, combining automated scale with irreplaceable human judgment, and treating deployment as the beginning of the process, not the end.

Key Takeaways

- AI answers validation is a multi phase process covering benchmarks, human review, red teaming, and staged rollout.

- Automated evaluation scales coverage, but human raters remain essential for catching nuanced failures.

- Red-teaming by diverse teams outside the core development group finds failure modes that internal tests miss.

- Common mistakes include over-relying on benchmarks, neglecting edge cases, and treating validation as a one-time event.

- Post-deployment monitoring is a required extension of pre-deployment validation, not an optional add-on.

- Leading organizations like Anthropic, OpenAI, and DeepMind each use distinct but complementary validation frameworks.

- The goal of validation is not just safety, but sustained accuracy, fairness, and trustworthiness across diverse user populations.

FAQs

1.What does AI answers validation before deployment actually involve?

It involves a combination of automated testing, human review, red teaming, safety filtering, and staged rollouts. The goal is to ensure the AI produces accurate, helpful, and safe responses across a wide range of inputs before real users encounter it.

2.How long does AI validation take before a model is deployed?

Timelines vary widely. A small fine-tuned model might go through validation in weeks. A major foundational model from a company like Anthropic or OpenAI can take months of iterative testing, red-teaming, and staged evaluation before public release.

3.Can automated tools replace human evaluators in AI validation?

Not entirely. Automated tools handle scale but miss subtlety. Human evaluators catch nuanced tone issues, cultural sensitivity failures, and sycophantic behaviors that classifiers often overlook. The best validation pipelines combine both.

4.What is hallucination in AI and how is it caught during validation?

Hallucination is when an AI confidently generates false information. It is caught through fact-checking pipelines that compare model outputs against verified sources, as well as through human rater review and grounding checks in retrieval augmented systems.

5.What happens if an AI answer fails validation?

The model goes back for further fine tuning, safety training, or filtering adjustments. Deployment is blocked until minimum quality and safety thresholds are met. In some cases, specific capabilities are restricted or disabled rather than delaying the whole release.

6.Is AI validation the same as AI alignment?

They are related but not identical. Alignment is the broader goal of making AI systems behave according to human values and intentions. Validation is one of the practical methods used to measure and improve alignment before a model ships.