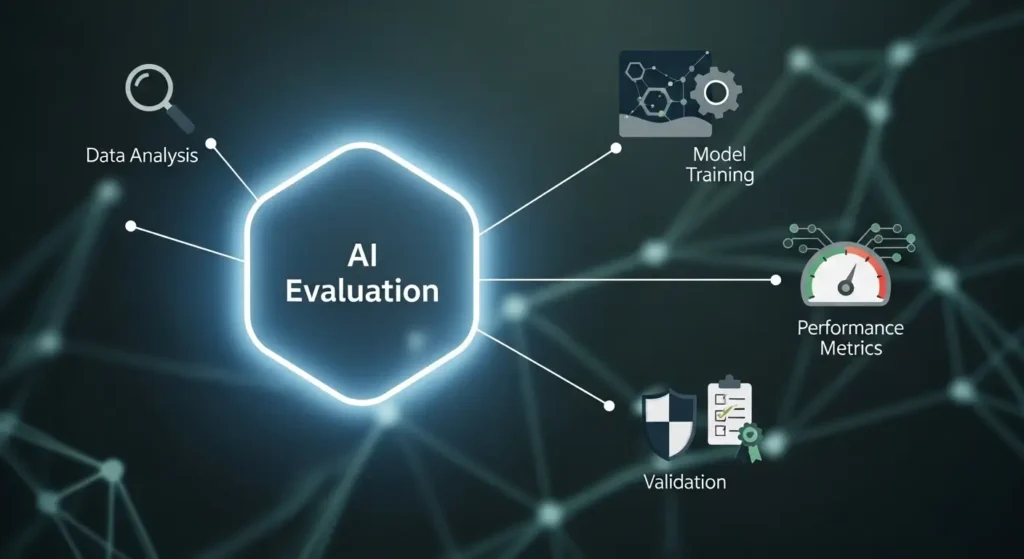

The real AI evaluation process is the structured method used to test, measure, and improve artificial intelligence models using human feedback, benchmark data, and performance metrics. It ensures that AI systems produce accurate, safe, and useful outputs before and after deployment.

In simple terms, the real AI evaluation process checks how well an AI system understands, responds, and performs in real-world situations. It combines automated testing with human judgment to improve quality, reduce errors, and build trust in AI systems used in businesses, apps, and decision-making tools.

Real AI Evaluation Process

The real AI evaluation process is a continuous cycle of testing, feedback, and improvement used to measure how effectively an AI model performs.

It focuses on three main areas:

- Accuracy of responses

- Safety and compliance

- User satisfaction and usefulness

Unlike simple testing, this process does not end after deployment. AI systems are evaluated continuously as they interact with real users and new data.

Key Goal

The goal is to make AI outputs:

- Relevant

- Reliable

- Safe

- Human-like in understanding

How the Real AI Evaluation Process Works

The real AI evaluation process follows a structured pipeline. Each stage builds on the one before it, so the model improves over time.

Step-by-Step Breakdown

| Stage | What Happens | Why It Matters |
|---|---|---|
| Data Preparation | Clean and label training data | Ensures quality inputs |
| Model Training | AI learns patterns from data | Builds initial intelligence |
| Automated Testing | Benchmarks and metrics applied | Measures performance quickly |
| Human Evaluation | Humans review outputs | Adds real-world judgment |
| Feedback Loop | Corrections are fed back | Improves future responses |
| Continuous Monitoring | Live performance tracked | Keeps AI reliable over time |

Simple Explanation

- AI is trained on data

- It generates outputs

- Humans and systems evaluate those outputs

- Feedback improves the model

This loop continues again and again.
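
The loop described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not a real pipeline: `ToyModel` and `run_cycle` are hypothetical names, the "model" is a lookup table, and an exact-match check stands in for both automated metrics and human review.

```python
from dataclasses import dataclass, field


@dataclass
class ToyModel:
    """Hypothetical stand-in for a model: a lookup table of known answers."""
    answers: dict = field(default_factory=dict)

    def generate(self, prompt: str) -> str:
        return self.answers.get(prompt, "I don't know")

    def update(self, corrections: dict) -> None:
        # "Retraining" in this toy is just storing the corrected answers.
        self.answers.update(corrections)


def run_cycle(model: ToyModel, references: dict):
    """One pass of the loop: generate, evaluate, collect corrections."""
    passed = 0
    corrections = {}
    for prompt, expected in references.items():
        output = model.generate(prompt)
        # Assumed check: exact match stands in for metrics plus human review.
        if output == expected:
            passed += 1
        else:
            corrections[prompt] = expected
    return passed / len(references), corrections


references = {"refund window?": "30 days", "support hours?": "9am to 5pm"}
model = ToyModel(answers={"refund window?": "30 days"})

first_rate, fixes = run_cycle(model, references)   # evaluate
model.update(fixes)                                # feed corrections back
second_rate, _ = run_cycle(model, references)      # evaluate again

print(first_rate, second_rate)  # prints "0.5 1.0"
```

In a real setup, the examples used for corrections would normally be kept separate from the examples used for scoring, so that the pass rate is not inflated by the model having seen the answers.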

Key Factors in the Real AI Evaluation Process

Several important factors define how strong an AI evaluation process is.

1. Accuracy

Measures how correct the AI responses are.

- Does it answer the question properly?

- Does it avoid hallucinations?
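
One simple way to put a number on accuracy is exact-match scoring against reference answers. The sketch below is a minimal example, assuming short factual answers where a normalized string comparison is meaningful. The function names and sample data are made up for illustration, and longer free-form answers usually need a softer metric or human review.

```python
import string


def normalize(text: str) -> str:
    """Lowercase, strip punctuation, and collapse whitespace."""
    text = text.lower().translate(str.maketrans("", "", string.punctuation))
    return " ".join(text.split())


def exact_match_accuracy(predictions, references) -> float:
    """Share of predictions that equal their reference after normalization."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0
    hits = sum(normalize(p) == normalize(r) for p, r in zip(predictions, references))
    return hits / len(references)


# Illustrative data only.
preds = ["Paris.", "42", "The Nile"]
refs = ["paris", "42", "The Amazon"]

accuracy = exact_match_accuracy(preds, refs)
print(f"{accuracy:.2f}")  # two of three answers match
```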

2. Relevance

Checks if the response matches user intent.

- Is the answer useful?

- Does it solve the problem?

3. Safety

Ensures the AI avoids harmful or misleading content. Targeted evaluation, such as checking responses for bias, helps catch skewed or unsafe outputs before they reach users.

- No biased outputs

- No unsafe recommendations

4. Consistency

AI should provide stable results across similar queries.
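
Consistency can be estimated by asking the same question in several phrasings and checking how often the answers agree. The sketch below is one possible approach, assuming answers can be compared as lowercased strings. The function name, grouping scheme, and sample answers are hypothetical.

```python
from collections import Counter


def consistency_rate(answers_by_group: dict) -> float:
    """Average share of answers in each group that match the most common answer."""
    rates = []
    for answers in answers_by_group.values():
        if not answers:
            continue
        counts = Counter(a.strip().lower() for a in answers)
        top_count = counts.most_common(1)[0][1]
        rates.append(top_count / len(answers))
    return sum(rates) / len(rates) if rates else 0.0


# Each key is one intent; each list holds answers to paraphrased prompts.
answers_by_group = {
    "reset_password": ["Use the reset link", "use the reset link", "Contact support"],
    "refund_window": ["30 days", "30 days", "30 days"],
}

rate = consistency_rate(answers_by_group)
print(f"{rate:.2f}")
```

A rate of 1.0 means every paraphrase produced the same answer. Lower values point to intents where the model's behavior depends on wording.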

5. Human Alignment

AI must behave in a way that aligns with human expectations and values.

Types of AI Evaluation Methods

Different methods are used in the real AI evaluation process.

Comparison Table

| Method | Description | Use Case |
|---|---|---|
| Automated Evaluation | Uses metrics like accuracy and BLEU score | Fast testing at scale |
| Human Evaluation | Real people rate responses | Quality and usefulness |
| A/B Testing | Compare two versions of AI | Product improvement |
| Reinforcement Learning | AI learns from feedback | Continuous improvement |
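
For A/B testing, the usual question is whether the difference between two versions is larger than chance would explain. The sketch below uses a standard two-proportion z-test with only the Python standard library. The counts are invented, and "resolved conversation" is an assumed success metric, not one named in this article.

```python
import math


def two_proportion_z_test(success_a, total_a, success_b, total_b):
    """Return (z, two-sided p-value) for the difference in success rates."""
    p_a = success_a / total_a
    p_b = success_b / total_b
    pooled = (success_a + success_b) / (total_a + total_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if se == 0:
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative counts: resolved conversations out of 500 for each version.
z, p = two_proportion_z_test(400, 500, 430, 500)
print(f"z={z:.2f}, p={p:.4f}")
```

A small p-value suggests version B's higher resolution rate is unlikely to be noise. The threshold for acting on it is a product decision, not something the test decides.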

Real Example of the Real AI Evaluation Process

Let’s look at a simple real-world scenario.

Scenario: Chatbot for Customer Support

A company launches an AI chatbot to handle customer queries.

What Happens Behind the Scenes

- The chatbot answers customer questions

- Users interact and give implicit feedback

- Human reviewers check conversations

- Errors are identified

- The system is retrained

Example Issues Found

- Wrong answers to billing questions

- Slow response time

- Irrelevant suggestions
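
Reviewer findings like these are easier to act on once they are tallied by category. The sketch below assumes each reviewed conversation carries an optional issue label. The conversation IDs and label names are invented for illustration.

```python
from collections import Counter

# (conversation id, issue label or None if the reviewer found no problem)
reviewed = [
    ("c-101", "wrong_billing_answer"),
    ("c-102", None),
    ("c-103", "irrelevant_suggestion"),
    ("c-104", "wrong_billing_answer"),
    ("c-105", "slow_response"),
    ("c-106", "wrong_billing_answer"),
]

# Count only conversations where an issue was flagged.
issue_counts = Counter(label for _, label in reviewed if label is not None)
issue_rate = sum(issue_counts.values()) / len(reviewed)

for label, count in issue_counts.most_common():
    print(label, count)
print(f"issue rate: {issue_rate:.2f}")
```

Sorting by frequency shows where retraining effort is likely to pay off first, which in this made-up sample is billing answers.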

Improvements Made

- Updated training data

- Added better intent detection

- Improved response templates

This is the real AI evaluation process in action.

Human Role in the Real AI Evaluation Process

Human input is critical. Human feedback helps make AI models more accurate, and this human layer is hard to replace when building systems that users can trust.

AI cannot fully evaluate itself because:

- It lacks real-world judgment

- It cannot fully understand context

What Human Evaluators Do

- Rate response quality

- Identify harmful content

- Check clarity and tone

- Compare multiple outputs

Human Rating Criteria

| Criteria | What It Measures |
|---|---|
| Helpfulness | Does it solve the problem? |
| Accuracy | Is it factually correct? |
| Clarity | Is it easy to understand? |
| Safety | Is it appropriate? |
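
Ratings against these criteria can be summarized per criterion, which also shows where reviewers disagree. The sketch below assumes a 1 to 5 scale and three reviewers per response. Neither detail comes from this article, and the scores are made up.

```python
from statistics import mean

CRITERIA = ("helpfulness", "accuracy", "clarity", "safety")


def summarize_ratings(ratings: list) -> dict:
    """Mean score and reviewer spread (max minus min) for each criterion."""
    summary = {}
    for criterion in CRITERIA:
        scores = [r[criterion] for r in ratings]
        summary[criterion] = {
            "mean": mean(scores),
            "spread": max(scores) - min(scores),
        }
    return summary


# One dict per reviewer, all rating the same response (assumed 1-5 scale).
ratings = [
    {"helpfulness": 4, "accuracy": 5, "clarity": 4, "safety": 5},
    {"helpfulness": 5, "accuracy": 2, "clarity": 4, "safety": 5},
    {"helpfulness": 3, "accuracy": 5, "clarity": 4, "safety": 5},
]

summary = summarize_ratings(ratings)
# Flag criteria where reviewers differ by two points or more.
needs_discussion = [c for c, s in summary.items() if s["spread"] >= 2]
print(needs_discussion)
```

A large spread often means the rating guidelines are ambiguous for that criterion, so it is worth reviewing the guideline as well as the response.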

Common Mistakes in AI Evaluation

Many teams fail to build a strong evaluation system.

Common Problems

- Relying only on automated metrics

- Ignoring user feedback

- Not updating models regularly

- Testing only in controlled environments

- Lack of human review

Why These Mistakes Matter

They lead to:

- Poor user experience

- Incorrect outputs

- Loss of trust

Best Practices for the Real AI Evaluation Process

To build a strong system, follow these proven practices.

1. Combine Human and Automated Evaluation

Do not rely on one method.
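
One hypothetical way to combine the two is to let automated scores handle the clear cases and send the uncertain ones to human reviewers. In the sketch below, the thresholds, the 0 to 1 score range, and the function name are all assumptions chosen for illustration.

```python
def triage(samples, low=0.3, high=0.8):
    """Split (id, automated score) pairs into pass, fail, and human-review lists."""
    auto_pass, auto_fail, needs_human = [], [], []
    for sample_id, score in samples:
        if score >= high:
            auto_pass.append(sample_id)
        elif score < low:
            auto_fail.append(sample_id)
        else:
            # Scores in the middle band are too uncertain to decide automatically.
            needs_human.append(sample_id)
    return auto_pass, auto_fail, needs_human


# Illustrative automated scores between 0 and 1.
samples = [("a", 0.95), ("b", 0.55), ("c", 0.10), ("d", 0.80), ("e", 0.30)]

auto_pass, auto_fail, needs_human = triage(samples)
print(auto_pass, auto_fail, needs_human)
```

Reviewers can also spot-check a sample of the automatic passes and fails, since an automated metric can be confidently wrong.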

2. Use Real User Data

Evaluate based on real interactions.

3. Create Clear Evaluation Guidelines

Define what good output looks like.

4. Monitor Performance Continuously

AI must be evaluated even after launch.

5. Focus on User Intent

Always check if the AI solves the user’s problem.

Advanced Concepts in AI Evaluation

As AI systems become more capable, the methods used to evaluate them become more sophisticated.

Reinforcement Learning from Human Feedback

Human ratings or preference comparisons are used as a training signal, so the AI's responses improve over time.

Benchmark Testing

Models are tested against standard datasets.
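
Benchmark scores are most useful when compared against a previous version of the model. The sketch below shows a simple regression check. The benchmark names, scores, and tolerance are hypothetical.

```python
def find_regressions(baseline: dict, candidate: dict, tolerance: float = 0.01) -> dict:
    """Return benchmarks where the candidate scores worse than baseline by more than tolerance."""
    regressions = {}
    for name, base_score in baseline.items():
        new_score = candidate.get(name)
        if new_score is None:
            # A missing result is treated as a regression so it gets noticed.
            regressions[name] = (base_score, None)
        elif base_score - new_score > tolerance:
            regressions[name] = (base_score, new_score)
    return regressions


# Illustrative scores between 0 and 1 for two model versions.
baseline = {"qa": 0.82, "summarization": 0.74, "safety": 0.97}
candidate = {"qa": 0.85, "summarization": 0.70, "safety": 0.97}

regressions = find_regressions(baseline, candidate)
print(regressions)
```

A check like this can run before each release so that an improvement on one benchmark does not hide a drop on another.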

Real-Time Evaluation

AI systems are monitored live so that issues can be detected quickly.
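
Live monitoring often comes down to tracking a failure rate over a recent window and raising an alert when it crosses a threshold. The sketch below is a minimal version of that idea. The class name, window size, and threshold are assumptions, and how a response gets marked as a failure is left to the surrounding system.

```python
from collections import deque


class RollingMonitor:
    """Tracks pass/fail results over a fixed-size window of recent responses."""

    def __init__(self, window_size: int = 100, max_failure_rate: float = 0.1):
        self.results = deque(maxlen=window_size)
        self.max_failure_rate = max_failure_rate

    def failure_rate(self) -> float:
        if not self.results:
            return 0.0
        return self.results.count(False) / len(self.results)

    def record(self, ok: bool) -> bool:
        """Store one result and return True if an alert should fire."""
        self.results.append(ok)
        # Wait for a full window so a few early failures do not trigger alerts.
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.failure_rate() > self.max_failure_rate


monitor = RollingMonitor(window_size=5, max_failure_rate=0.4)
stream = [True, True, False, True, True, False, False, True]
alerts = [monitor.record(ok) for ok in stream]
print(alerts)
```

With this stream, the alert fires only on the seventh result, when three of the last five responses have failed.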

Real AI Evaluation Process vs Traditional Testing

| Aspect | Traditional Testing | Real AI Evaluation Process |
|---|---|---|
| Frequency | One-time testing | Continuous evaluation |
| Focus | Code correctness | Output quality |
| Feedback | Developer based | Human and user based |
| Adaptability | Static | Dynamic and evolving |

Conclusion

The real AI evaluation process is what turns an average AI system into a reliable and useful one. It is not just about testing outputs once, but about continuously improving the model through human feedback, real user interactions, and performance tracking. By combining automated metrics with human judgment, businesses can ensure their AI delivers accurate, relevant, and safe responses in real-world situations.

In the long run, strong evaluation systems build trust, improve user experience, and reduce errors. Companies that focus on the real AI evaluation process are able to adapt faster, improve quality over time, and create AI solutions that truly solve user problems instead of just generating responses.

Key Takeaways

- Strong evaluation leads to better AI performance and trust

- The real AI evaluation process is continuous, not one-time

- It combines automated metrics and human feedback

- Accuracy, safety, and relevance are critical factors

- Real user data improves evaluation quality

FAQs

1. What is the real AI evaluation process in simple terms?

The real AI evaluation process is the method used to test and improve AI systems by checking their accuracy, relevance, and safety. It combines automated metrics and human feedback to ensure the AI gives useful and correct answers.

2. Why is the real AI evaluation process important?

The real AI evaluation process is important because it helps AI systems produce reliable and safe outputs. Without proper evaluation, AI can give incorrect or misleading responses, which can reduce user trust.

3. How does the real AI evaluation process work?

The real AI evaluation process works in a cycle. AI generates responses, those responses are tested using metrics and human review, feedback is collected, and then the model is improved based on that feedback.

4. What are the key factors in the real AI evaluation process?

The key factors include accuracy, relevance, safety, consistency, and human alignment. These factors ensure that AI responses are correct, helpful, and appropriate for users.

5. What is the role of human feedback in AI evaluation?

Human feedback plays a critical role in the real AI evaluation process. Humans review AI outputs to check quality, detect errors, and provide insights that automated systems cannot capture.

6. What tools are used in the real AI evaluation process?

Common tools include benchmark datasets, human rating platforms, analytics dashboards, and performance metrics systems. These tools help measure and improve AI performance.