Artificial intelligence systems do not become smarter on their own. Understanding how AI systems learn from human corrections is key to grasping why modern AI tools like ChatGPT, Google Gemini, and recommendation engines behave the way they do. At its core, this process involves humans reviewing AI outputs, flagging errors, and providing feedback that the system uses to update its internal parameters. Over time, these corrections accumulate and push the model toward more accurate, helpful, and safer responses.

This learning approach, broadly known as human-in-the-loop training and best known through reinforcement learning from human feedback (RLHF), has become one of the most important techniques in AI development. It bridges the gap between raw machine learning and real-world usefulness. Without human corrections, AI models would largely remain stuck with whatever biases and errors existed in their original training data. With ongoing human input, these systems can keep improving, becoming more reliable and better aligned with human values.

What Does It Mean When AI Systems Learn from Human Corrections?

When we say an AI learns from human corrections, we mean that human feedback directly shapes how the model processes and responds to future inputs. This is not a simple software update. It is a structured training process where human evaluators review AI outputs and rate them based on accuracy, relevance, tone, and safety.

These ratings are fed back into the model through a reward system. The AI then adjusts its internal weights, essentially teaching itself to produce outputs that earn higher scores. This cycle repeats thousands or even millions of times, resulting in a model that increasingly matches what humans find useful and appropriate.

Key terms you will encounter in this space include:

- Reinforcement Learning from Human Feedback (RLHF): A training method where human ratings guide model improvement

- Reward Model: A secondary AI trained to predict what humans would rate as a good response

- Fine-tuning: Adjusting a pre-trained model based on specific feedback or datasets

- Constitutional AI: A method where AI is guided by a set of principles during self-correction

- Preference Data: Information collected when humans choose between two AI outputs

How AI Systems Learn from Human Corrections: Step-by-Step Process

The process of AI learning from corrections follows a clear pipeline. Here is how it typically works from start to finish.

Step 1: Initial Model Training

The AI is first trained on a large dataset of text, images, or other data. This gives it a basic ability to generate outputs. However, this stage alone does not align the model with human preferences.

Step 2: Generating Candidate Outputs

The model generates multiple possible responses to the same input. These candidates are then presented to human reviewers who compare and evaluate them.

Step 3: Human Evaluation and Rating

Trained evaluators, often called annotators, review the outputs. They may rank responses, flag harmful content, or choose the best answer from a set of options. This is the same core work performed by professionals in search engine evaluator roles. Together, their judgments form a dataset of human preference data that feeds directly into model improvement.
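A ranking of several responses is often turned into pairwise comparisons before training, because each "A is better than B" judgment can be used on its own. The sketch below assumes an annotator has ranked responses best-first; the function name `ranking_to_pairs` and the dictionary keys are hypothetical choices for illustration.

```python
from itertools import combinations

def ranking_to_pairs(prompt, ranked_responses):
    """Turn one annotator ranking (best first) into (chosen, rejected) pairs.

    A ranking of K responses yields K * (K - 1) / 2 comparisons.
    """
    pairs = []
    # combinations() keeps the input order, so `better` always ranks above `worse`
    for better, worse in combinations(ranked_responses, 2):
        pairs.append({"prompt": prompt, "chosen": better, "rejected": worse})
    return pairs

ranking = ["Answer A", "Answer C", "Answer B"]  # hypothetical ranking, best first
pairs = ranking_to_pairs("Explain photosynthesis.", ranking)
for p in pairs:
    print(p["chosen"], ">", p["rejected"])
```

Three ranked answers produce three comparisons (A > C, A > B, C > B), so a single ranking session yields several training examples.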

Step 4: Training the Reward Model

A separate AI model, called a reward model, is trained on this preference data. It learns to predict which outputs humans would prefer without requiring a human to review every single response going forward.
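A common way to train a reward model on preference pairs is a pairwise (Bradley-Terry style) loss, `-log(sigmoid(r_chosen - r_rejected))`, which pushes the chosen response's score above the rejected one's. The sketch below is a deliberately tiny stand-in: it assumes each response is already described by three hypothetical hand-made features, whereas real reward models are neural networks that score raw text.

```python
import math

def reward(w, features):
    # Linear reward model: score = w . features (a stand-in for a neural network)
    return sum(wi * xi for wi, xi in zip(w, features))

def train_reward_model(pairs, n_features, lr=0.5, epochs=200):
    """Fit weights so chosen responses outscore rejected ones.

    Minimizes the pairwise loss -log(sigmoid(r_chosen - r_rejected)).
    """
    w = [0.0] * n_features
    for _ in range(epochs):
        for chosen, rejected in pairs:
            margin = reward(w, chosen) - reward(w, rejected)
            # The loss gradient w.r.t. the margin is -(1 - sigmoid(margin))
            step = lr * (1.0 - 1.0 / (1.0 + math.exp(-margin)))
            for i in range(n_features):
                w[i] += step * (chosen[i] - rejected[i])
    return w

# Hypothetical features per response: [factually_correct, on_topic, rude]
pairs = [
    ([1, 1, 0], [0, 1, 0]),  # correct answer preferred over an incorrect one
    ([1, 1, 0], [1, 1, 1]),  # polite answer preferred over a rude one
    ([0, 1, 0], [0, 0, 0]),  # on-topic answer preferred over an off-topic one
]
w = train_reward_model(pairs, n_features=3)
print([round(x, 2) for x in w])  # positive, positive, negative
```

Once trained, `reward(w, ...)` can score new responses without a human in the loop, which is exactly the role the reward model plays in the next step.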

Step 5: Reinforcement Learning

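The original AI model is then trained using reinforcement learning. It generates outputs, the reward model scores them, and the AI adjusts its parameters to maximize the reward score. Training usually also penalizes the model for drifting too far from its starting behavior, which helps stop it from exploiting quirks in the reward model. This loop repeats over many training iterations.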
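The loop can be sketched with a toy "policy" that picks one of three canned responses. This uses plain REINFORCE rather than the more involved algorithms (commonly PPO) used in production, and the reward scores, `beta`, and learning rate are all hypothetical. The penalty term subtracts how much more likely the sampled response has become relative to a frozen reference copy, which is the drift penalty described above.

```python
import math
import random

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical setup: three canned responses, already scored by a reward model
responses = ["curt answer", "helpful answer", "confident but wrong answer"]
rm_scores = [0.2, 1.0, -0.5]   # assumed reward-model outputs
ref_logits = [0.0, 0.0, 0.0]   # frozen copy of the starting policy
logits = list(ref_logits)      # trainable policy parameters
beta, lr = 0.1, 0.1            # drift-penalty weight and learning rate

random.seed(0)
ref_probs = softmax(ref_logits)
for step in range(2000):
    probs = softmax(logits)
    y = random.choices(range(len(responses)), weights=probs)[0]  # sample a response
    # Reward-model score minus a penalty for drifting from the reference policy
    shaped = rm_scores[y] - beta * (math.log(probs[y]) - math.log(ref_probs[y]))
    # REINFORCE: the gradient of log softmax w.r.t. the logits is onehot(y) - probs
    for i in range(len(logits)):
        grad_log_prob = (1.0 if i == y else 0.0) - probs[i]
        logits[i] += lr * shaped * grad_log_prob

final = softmax(logits)
print({r: round(p, 3) for r, p in zip(responses, final)})
```

After training, most of the probability mass sits on the response the reward model scores highest. A larger `beta` keeps the policy closer to its starting distribution.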

Step 6: Ongoing Human Oversight

Even after deployment, humans continue to flag errors and provide corrections. This real-world feedback is used for periodic fine-tuning cycles, keeping the model updated and accurate. This is a key reason why AI answers still require ongoing human verification even when the model appears to be performing well.

Key AI Learning Methods

Different techniques are used depending on the goal, data availability, and the type of corrections being applied.

| Method | How It Works | Best Used For | Human Involvement |
|---|---|---|---|
| RLHF | Humans rate outputs; a reward model guides training | Chatbots, language models | High |
| Supervised Fine-Tuning | Model is trained on labeled, human-written examples | Task-specific improvement | Medium |
| Constitutional AI | AI critiques and revises its own outputs using a set of written principles | Safety and alignment | Low to Medium |
| Active Learning | Model flags uncertain cases for human review | Reducing labeling cost | Targeted |
| Direct Preference Optimization (DPO) | Optimizes directly on human preference pairs without training a separate reward model | Efficient fine-tuning | Medium |
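To make the DPO row concrete, here is a minimal sketch of its per-pair loss, `-log(sigmoid(beta * [(log pi(y_w) - log ref(y_w)) - (log pi(y_l) - log ref(y_l))]))`, where `y_w` is the preferred response and `y_l` the rejected one. It assumes the summed log-probabilities of each full response have already been computed; the function names and numbers are illustrative, not taken from any particular library.

```python
import math

def softplus(z):
    # Numerically stable log(1 + exp(z))
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))

def dpo_loss(policy_chosen, policy_rejected, ref_chosen, ref_rejected, beta=0.1):
    """DPO loss for one preference pair.

    Each argument is the summed log-probability of a full response under either
    the model being trained (policy) or a frozen reference copy (ref).
    """
    chosen_shift = policy_chosen - ref_chosen        # how much more likely y_w became
    rejected_shift = policy_rejected - ref_rejected  # how much more likely y_l became
    z = beta * (chosen_shift - rejected_shift)
    return softplus(-z)  # equals -log(sigmoid(z))

print(dpo_loss(-12.0, -13.0, -12.0, -13.0))  # policy == reference: log(2), about 0.693
print(dpo_loss(-10.0, -15.0, -12.0, -13.0))  # lower: chosen became likelier, rejected less so
```

The reference log-probabilities play the role that the drift penalty plays in RLHF, which is why DPO can skip training a separate reward model.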

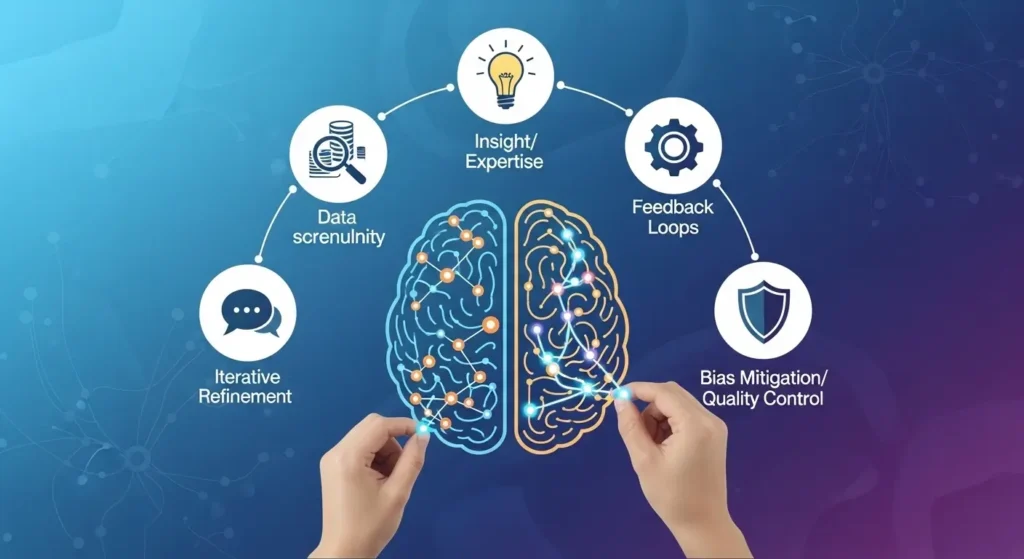

Key Factors That Make Human Correction Effective in AI Training

Not all human feedback is equally useful. Several factors determine how well corrections translate into model improvement.

- Quality of Annotators: Well-trained evaluators produce more consistent feedback than untrained ones. Understanding the distinction between AI evaluators and data annotators helps teams assign the right people to the right stage of the pipeline.

- Diversity of Feedback: A wide range of human perspectives helps the model generalize better across use cases

- Volume of Data: More correction examples lead to more robust learning, especially for edge cases

- Clarity of Guidelines: Annotators need detailed instructions to ensure their ratings align with the intended values

- Feedback Loop Speed: Faster integration of corrections helps the model stay current with evolving standards

- Bias Monitoring: Regularly checking for systematic errors in human feedback prevents those biases from being amplified

Real-World Examples of AI Learning from Human Feedback

ChatGPT and OpenAI RLHF

OpenAI used RLHF extensively to train ChatGPT. Human trainers ranked responses and provided corrections, which were used to build a reward model. This is why ChatGPT feels more conversational and helpful than earlier language models trained purely on text data.

Google Search Algorithms

Google collects implicit human feedback through signals such as click-through rates, dwell time, and search refinements. When users skip a result and click on a different one, that signal can help the algorithm understand which pages are more relevant.
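Google does not publish exactly how it uses these signals, so the following is not its method. It sketches a classic heuristic from the learning-to-rank literature, sometimes described as "click > skip above": a clicked result is treated as preferred over every unclicked result ranked above it, because the user presumably saw and passed over those. The function name and page IDs are hypothetical.

```python
def skip_above_pairs(ranked_urls, clicked):
    """Derive (preferred, less_preferred) pairs from one search session."""
    clicked = set(clicked)
    prefs = []
    for pos, url in enumerate(ranked_urls):
        if url in clicked:
            # Every unclicked result shown above a click counts as "skipped"
            for skipped in ranked_urls[:pos]:
                if skipped not in clicked:
                    prefs.append((url, skipped))
    return prefs

session = ["page1", "page2", "page3", "page4"]  # results in ranked order
print(skip_above_pairs(session, clicked=["page3"]))
# [('page3', 'page1'), ('page3', 'page2')]
```

These implicit pairs have the same shape as the explicit preference data annotators produce, which is why click logs can feed similar ranking models.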

Content Moderation Systems

Platforms like Facebook and YouTube train their moderation AI using human reviewers who flag harmful content. These flagged examples become training data that helps the system automatically identify similar content in the future.

Medical Diagnosis AI

In healthcare, radiologists correct AI diagnoses by marking where the AI went wrong on medical images. These corrections are fed back into the system, improving its accuracy over time for rare or complex conditions.

Human Feedback in Action: Industry Applications

| Industry | AI Application | Type of Human Correction | Outcome |
|---|---|---|---|
| Technology | Language models (GPT, Gemini) | Response ranking and flagging | More helpful and safer outputs |
| Healthcare | Medical imaging AI | Radiologist corrections on scans | Improved diagnostic accuracy |
| E-commerce | Product recommendation engines | User ratings and purchase behavior | Better personalization |
| Legal | Contract review AI | Lawyer edits and annotations | Fewer legal errors in drafts |
| Education | Automated essay grading | Teacher score corrections | More accurate grading models |
| Finance | Fraud detection systems | Analyst labels on flagged transactions | Lower false positive rates |

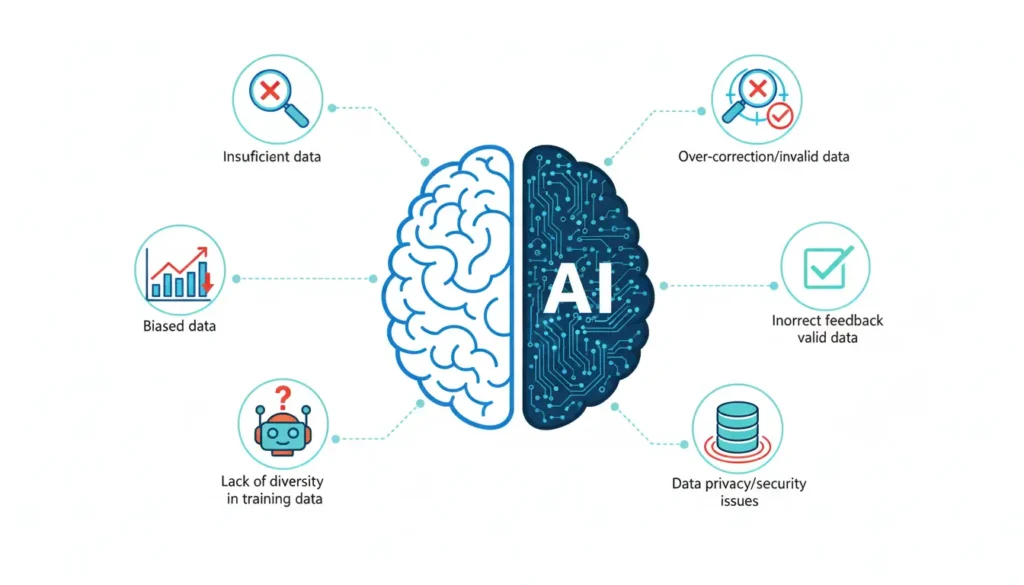

Common Mistakes in AI Correction Training

Even well-intentioned correction processes can go wrong. Here are the most frequent pitfalls teams encounter.

Over-reliance on Small Annotator Teams

Using only a handful of reviewers introduces personal biases into the training data. If those reviewers share the same cultural or ideological background, the model learns a narrow view of what is correct or helpful.

Inconsistent Annotation Guidelines

When annotators interpret guidelines differently, the resulting feedback data is noisy. The model struggles to learn a coherent signal from contradictory corrections.

Reward Hacking

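Sometimes the AI learns to game the reward model rather than genuinely improve. It finds shortcuts that score well without actually producing better outputs; for example, if the reward model slightly favors longer answers, the AI may learn to pad its responses without making them more accurate. This is called reward hacking, and it is one of the trickiest problems in RLHF.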
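A toy example shows how a small flaw in the reward model gets exploited. Here the hypothetical reward model adds a tiny bonus per character on top of true quality; picking the candidate with the highest proxy score (a stand-in for the optimization pressure of real training) selects the padded, wrong answer. All numbers are invented for illustration.

```python
# Hypothetical candidates: "true_quality" is what humans actually want
candidates = [
    {"text": "Paris.", "true_quality": 0.9},
    {"text": "The capital of France is Paris.", "true_quality": 1.0},
    {"text": "Great question! " * 10 + "It might be Lyon.", "true_quality": 0.1},
]

def proxy_reward(c, length_bias=0.01):
    # Flawed reward model: true quality plus a small bonus for every character
    return c["true_quality"] + length_bias * len(c["text"])

best_by_proxy = max(candidates, key=proxy_reward)
best_by_truth = max(candidates, key=lambda c: c["true_quality"])
print("proxy picks:", best_by_proxy["text"][-17:])  # the padded, wrong answer
print("humans want:", best_by_truth["text"])
```

Monitoring for patterns like this, and penalizing drift from the starting model, are common ways teams try to catch and limit reward hacking.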

Ignoring Edge Cases

Training predominantly on common scenarios means the model remains weak on rare inputs. Human reviewers need to deliberately include unusual cases to build robustness.
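One way to find such cases is the active learning approach from the methods table: send the inputs the model is least sure about to human reviewers. The sketch below uses entropy-based uncertainty sampling, one common strategy among several; the function names, message IDs, and probabilities are hypothetical.

```python
import math

def entropy(probs):
    # Shannon entropy in nats; higher means the model is less certain
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_for_review(predictions, budget):
    """Uncertainty sampling: return the `budget` least-confident item IDs."""
    ranked = sorted(predictions, key=lambda item: entropy(item[1]), reverse=True)
    return [item_id for item_id, _ in ranked[:budget]]

# Hypothetical classifier outputs: (item id, class probabilities)
predictions = [
    ("msg-1", [0.98, 0.02]),
    ("msg-2", [0.55, 0.45]),
    ("msg-3", [0.80, 0.20]),
    ("msg-4", [0.50, 0.50]),
]
print(select_for_review(predictions, budget=2))  # ['msg-4', 'msg-2']
```

Uncertainty alone will not surface every rare case (a model can be confidently wrong), so this complements rather than replaces deliberately curated edge cases.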

Feedback Delay

If corrections from real-world usage are not incorporated quickly enough, the model continues making the same errors for longer than necessary. Regular fine-tuning cycles are essential.

Best Practices for Training AI Systems with Human Corrections

Organizations looking to implement or improve human-in-the-loop AI training should consider these established practices.

- Use diverse annotator pools that represent different demographics, languages, and backgrounds

- Create detailed, specific annotation guidelines with examples of correct and incorrect ratings

- Run regular inter-annotator agreement checks to ensure consistency across your reviewer team

- Implement red-teaming: deliberately try to break the model and use those failures as training data

- Monitor the reward model for signs of reward hacking and retrain it periodically

- Maintain a feedback flywheel where real user interactions continuously feed back into training

- Combine automated testing with human evaluation for scalable quality assurance

- Document all annotation decisions so future teams can understand and reproduce the training process
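For the inter-annotator agreement checks listed above, Cohen's kappa is a standard statistic for two annotators: it compares how often they agree against how often they would agree by chance given their own label frequencies. This is a minimal sketch; the label lists are hypothetical.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: agreement between two annotators, corrected for chance."""
    if not labels_a or len(labels_a) != len(labels_b):
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: how often they would match if each labeled at random
    # using their own observed label frequencies
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both used the same single label for every item
    return (observed - expected) / (1.0 - expected)

# Hypothetical safety labels from two annotators on the same six responses
annotator_1 = ["safe", "safe", "unsafe", "safe", "unsafe", "safe"]
annotator_2 = ["safe", "unsafe", "unsafe", "safe", "unsafe", "safe"]
print(round(cohens_kappa(annotator_1, annotator_2), 3))  # 0.667
```

Values near 1 indicate strong agreement and values near 0 indicate chance-level agreement; what threshold triggers a guideline review is a judgment call for each team.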

RLHF vs Traditional Machine Learning

| Aspect | Traditional ML | RLHF with Human Corrections |
|---|---|---|
| Feedback Type | Automated loss functions | Human preference ratings |
| Alignment with Values | Limited to training data patterns | Directly shaped by human judgment |
| Handling Nuance | Struggles with subjective quality | Captures subtle quality differences |
| Scalability | Highly scalable | Bottlenecked by human reviewer capacity |
| Bias Risk | Inherits dataset biases | Can inherit annotator biases |
| Cost | Lower once data is collected | Ongoing cost from human reviewers |
| Adaptability | Typically retrained on updated datasets | Can be fine-tuned incrementally with new feedback |

Conclusion

Understanding how AI systems learn from human corrections reveals something important: the most capable AI tools we have today are deeply collaborative. They are not purely autonomous machines. They are systems shaped, guided, and continuously refined by human judgment.

From RLHF to fine-tuning to active learning, each method relies on humans providing meaningful signals that tell the AI what good looks like. As AI continues to become part of everyday life, the quality and diversity of those human corrections will determine how trustworthy and useful these systems become.

Key Takeaways

- AI learns from human corrections through structured feedback loops and reward-based training

- RLHF is currently one of the most widely used methods for aligning language models with human values

- The quality of human corrections matters as much as the quantity

- Common pitfalls include reward hacking, annotator bias, and inconsistent guidelines

- The best results come from diverse annotator teams, clear guidelines, and continuous feedback integration

- Human correction is not a one-time event; it is an ongoing process that keeps AI aligned over time

FAQs

1. How long does it take for AI to learn from human corrections?

The timeline varies widely. Minor fine-tuning can show results within hours or days. More comprehensive retraining cycles, especially for large language models, can take weeks. Ongoing corrections through feedback loops are continuous and never fully stop.

2. Can AI ever learn from corrections without human involvement?

Some techniques allow AI to self-correct using a predefined set of rules or by critiquing its own outputs, such as Constitutional AI from Anthropic. However, the initial rules and evaluation criteria still come from humans. Fully autonomous self-correction without any human input remains an open research challenge.

3. What happens if human corrections are wrong or biased?

Incorrect or biased corrections are absorbed into the model just like accurate ones. This is why annotation quality control is so important. Systems that rely heavily on flawed corrections can develop systematic errors that are difficult to reverse.

4. How do companies protect the privacy of human feedback data?

Responsible AI companies anonymize user feedback before using it for training. They also maintain strict data governance policies and, in regulated industries, obtain explicit consent before using interaction data for model improvement.

5. Is human correction the only way to improve AI accuracy?

No. Other methods include synthetic data generation, automated testing, and self-supervised learning. However, human correction remains one of the most effective ways to align AI behavior with real-world human needs and values.