Artificial Intelligence (AI) has become a critical force driving innovation across industries, from healthcare and finance to marketing and manufacturing. However, the success of any AI system depends heavily on how well it is trained and evaluated. An AI training evaluator plays a pivotal role in this process by assessing model performance, identifying errors, detecting biases, and providing insights for optimization. By continuously monitoring AI models, these evaluators ensure that predictions are accurate, reliable, and aligned with real-world requirements, ultimately bridging the gap between development and deployment.

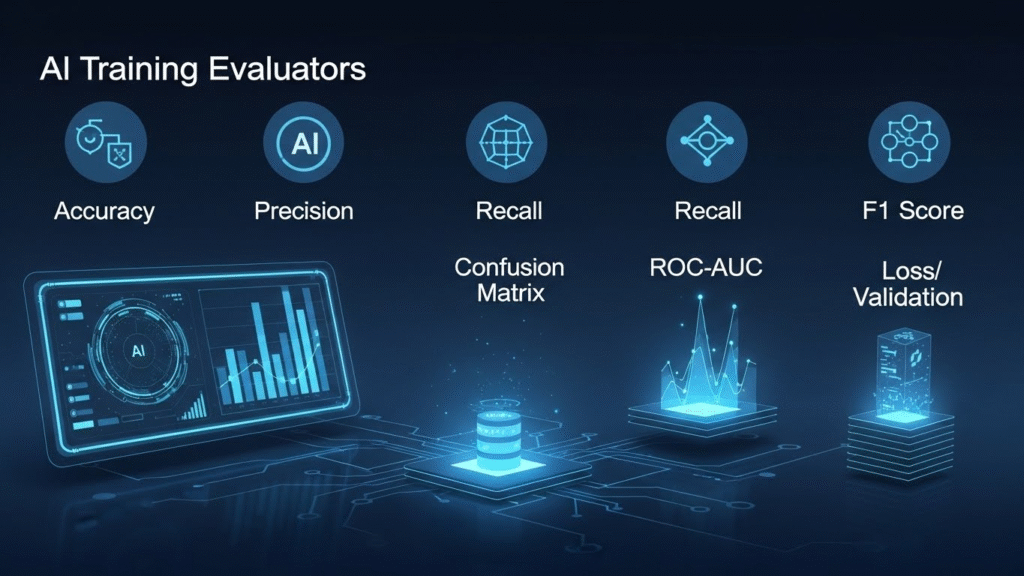

Using the right evaluation metrics, such as accuracy, precision, recall, F1 score, and ROC-AUC, AI developers can measure strengths and weaknesses across datasets, identify areas for improvement, and refine models for better results. In addition, combining automated tools with expert human oversight ensures robust, scalable, and context-aware evaluation. With the increasing reliance on AI in critical applications, implementing a comprehensive AI training evaluation strategy has become essential for improving performance, maintaining fairness, and ensuring ethical compliance.

What is an AI Training Evaluator?

An AI training evaluator is a system or framework designed to assess the performance of AI models during and after the training process. Its primary goal is to measure how well a model can learn from data, adapt to new inputs, and make accurate predictions.

Key Functions of AI Training Evaluators

Performance Assessment: Evaluators measure how well the AI model predicts outcomes on both training and validation data. This helps determine whether the model is learning correctly and whether it can generalize to new, unseen data. It provides a clear view of the model’s strengths and weaknesses.

Error Analysis: By analyzing where the model makes mistakes, evaluators help identify patterns of errors, such as misclassified inputs or inconsistent predictions. This insight guides developers on how to correct issues and improve model accuracy.

Bias Detection: Evaluators check whether the AI unfairly favors certain groups or outcomes. Detecting bias helps keep decisions ethical and equitable, which is crucial in sensitive applications like hiring or healthcare.

Optimization Guidance: Evaluators provide actionable insights for fine-tuning hyperparameters, adjusting algorithms, or improving training data. This helps the model reach strong performance efficiently.

Compliance & Reporting: Evaluators document results and maintain transparency. This helps organizations meet regulatory standards and ethical guidelines, and provides proof of AI reliability for audits or stakeholders.

AI training evaluators are critical because a poorly trained AI model can lead to inaccurate predictions, flawed decision-making, and operational risks.
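As a rough illustration of the error analysis function described above, the sketch below tallies which (actual, predicted) label pairs a classifier confuses most often. The labels and the helper name `confusion_pairs` are hypothetical and chosen only for this example; a library such as scikit-learn offers a fuller `confusion_matrix` for real projects.

```python
from collections import Counter


def confusion_pairs(y_true, y_pred):
    """Count (actual, predicted) pairs for misclassified examples only."""
    return Counter((t, p) for t, p in zip(y_true, y_pred) if t != p)


# Hypothetical labels for a three-class problem (illustrative data only).
y_true = ["cat", "dog", "dog", "bird", "cat", "dog", "bird", "cat"]
y_pred = ["cat", "cat", "dog", "cat", "cat", "cat", "bird", "dog"]

errors = confusion_pairs(y_true, y_pred)

# List the most frequent confusions first so they can be investigated first.
for (actual, predicted), count in errors.most_common():
    print(f"actual={actual!r} predicted={predicted!r} count={count}")
```

In this toy data the most common mistake is a dog labeled as a cat, which would suggest looking at the training examples for those two classes first.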

Importance of AI Training Evaluators in AI Development

Deploying an AI model without proper evaluation is like navigating a ship without a compass. Here’s why AI training evaluators are indispensable:

Improved Accuracy: AI training evaluators continuously monitor model predictions, helping developers identify errors and fine-tune algorithms. This iterative feedback helps the model become more precise over time and deliver reliable, consistent results.

Early Detection of Issues: Evaluators can spot problems such as overfitting, underfitting, or data inconsistencies early in the development process. Detecting these issues before deployment saves time and resources and prevents performance failures in real-world scenarios.

Bias and Fairness Assessment: By analyzing predictions across different groups or categories, evaluators help ensure AI systems operate fairly. This is crucial for avoiding discrimination in applications like hiring, lending, or healthcare.

Resource Efficiency: Evaluators help identify ineffective training cycles, reducing wasted computational power and time. Optimizing training processes ensures resources are used efficiently without compromising model quality.

Regulatory Compliance: Many industries require AI systems to meet ethical and legal standards. Evaluators document performance and fairness, helping organizations demonstrate compliance with these regulations and industry best practices.

In short, AI training evaluators act as a bridge between model development and practical, real-world deployment.
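One simple way to approach the bias and fairness assessment mentioned above is to compute the same metric separately for each group and compare the results. The sketch below does this for recall. The group labels, data, and function name `recall_by_group` are assumptions made for illustration, and recall parity is only one of several possible fairness criteria.

```python
from collections import defaultdict


def recall_by_group(y_true, y_pred, groups):
    """Return recall (true positive rate) for each group label."""
    tp = defaultdict(int)  # true positives per group
    fn = defaultdict(int)  # false negatives per group
    for t, p, g in zip(y_true, y_pred, groups):
        if t == 1:
            if p == 1:
                tp[g] += 1
            else:
                fn[g] += 1
    return {g: tp[g] / (tp[g] + fn[g]) for g in set(tp) | set(fn)}


# Hypothetical binary labels and predictions for two groups, "A" and "B".
y_true = [1, 1, 1, 1, 0, 1, 1, 1, 1, 0]
y_pred = [1, 1, 1, 0, 0, 1, 0, 0, 1, 0]
groups = ["A"] * 5 + ["B"] * 5

rates = recall_by_group(y_true, y_pred, groups)
gap = max(rates.values()) - min(rates.values())

for group in sorted(rates):
    print(f"group {group}: recall = {rates[group]:.2f}")
print(f"largest recall gap between groups: {gap:.2f}")
```

A large gap does not prove the model is unfair, but it flags where a closer human review is warranted.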

Core Metrics in AI Training Evaluation

To assess AI performance effectively, evaluators use a combination of metrics. These metrics help quantify the strengths and weaknesses of a model.

1. Accuracy

Accuracy measures the percentage of correct predictions made by the model. While widely used, accuracy alone can be misleading for imbalanced datasets.

$$\text{Accuracy} = \frac{\text{Correct Predictions}}{\text{Total Predictions}} \times 100$$
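To see why accuracy can mislead on imbalanced data, consider a hypothetical dataset with 5 positive and 95 negative examples, and a "model" that always predicts the negative class. The numbers below are invented purely for illustration.

```python
def accuracy(y_true, y_pred):
    """Percentage of predictions that match the true labels."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return 100 * correct / len(y_true)


# Hypothetical imbalanced dataset: 5 positives, 95 negatives.
y_true = [1] * 5 + [0] * 95

# A trivial baseline that never predicts the positive class.
always_negative = [0] * 100

acc = accuracy(y_true, always_negative)
positives_found = sum(
    1 for t, p in zip(y_true, always_negative) if t == 1 and p == 1
)

print(f"accuracy: {acc:.1f}%")
print(f"positives correctly identified: {positives_found} of 5")
```

The baseline reaches 95% accuracy while finding none of the positive cases, which is why accuracy is usually paired with the metrics that follow.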

2. Precision

Precision determines how many of the predicted positive results are actually correct. High precision reduces false positives.

$$\text{Precision} = \frac{\text{True Positives}}{\text{True Positives} + \text{False Positives}}$$

3. Recall

Recall (also called sensitivity) measures how many actual positives the model correctly identified. High recall minimizes false negatives.

$$\text{Recall} = \frac{\text{True Positives}}{\text{True Positives} + \text{False Negatives}}$$

4. F1 Score

The F1 score is the harmonic mean of precision and recall, providing a single metric for evaluating the balance between the two.

$$\text{F1 Score} = 2 \times \frac{\text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}}$$
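The three formulas above can be worked through from raw confusion counts. The sketch below is a minimal version that assumes binary classification; the counts (40 true positives, 10 false positives, 40 false negatives) are made up for the example, and returning 0.0 when a denominator is zero is a convention chosen here, not a universal rule.

```python
def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall, and F1 from confusion counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Hypothetical counts: 40 true positives, 10 false positives, 40 false negatives.
p, r, f1 = precision_recall_f1(tp=40, fp=10, fn=40)

print(f"precision = {p:.3f}")
print(f"recall    = {r:.3f}")
print(f"F1        = {f1:.3f}")
```

Here precision is 0.8 and recall is 0.5. The F1 score comes out near 0.615, below the arithmetic mean of 0.65, because the harmonic mean is pulled toward the weaker of the two values.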

5. ROC-AUC

ROC-AUC (Receiver Operating Characteristic – Area Under Curve) measures the model’s ability to distinguish between classes. A higher AUC indicates better performance.
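One way to read AUC is as the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, with ties counted as half. The sketch below computes it directly from that definition by comparing every positive/negative pair. This pairwise approach is fine for small illustrative data but slow for large datasets, where a library routine such as scikit-learn's `roc_auc_score` would normally be used. The scores shown are hypothetical.

```python
def roc_auc(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0   # positive ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half
    return wins / (len(pos) * len(neg))


# Hypothetical labels and model scores.
y_true = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]

auc = roc_auc(y_true, scores)
print(f"ROC-AUC = {auc:.2f}")
```

Three of the four positive/negative pairs are ordered correctly, so the AUC is 0.75. A value of 0.5 corresponds to random ranking and 1.0 to perfect separation.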

| Metric | Purpose | Importance |
|---|---|---|
| Accuracy | Measures overall correctness | Useful for balanced datasets |
| Precision | Measures correctness of positive predictions | Reduces false positives |
| Recall | Measures coverage of actual positives | Reduces false negatives |
| F1 Score | Balances precision and recall | Best for imbalanced datasets |
| ROC-AUC | Evaluates model discrimination ability | Shows overall capability in classification tasks |

Types of AI Training Evaluators

AI training evaluators can vary depending on the type of model and the application. Here are the most common types:

1. Automated Evaluators

Automated evaluators use scripts and AI tools to assess models continuously. They are ideal for large datasets and complex models.

Advantages:

- Speed and scalability

- Reduces human error

Disadvantages:

- May overlook subtle context-specific issues

2. Manual Evaluators

Manual evaluation involves human experts reviewing model outputs, especially in natural language processing or creative AI systems.

Advantages:

- Context-aware and nuanced assessment

- Can identify errors automated systems miss

Disadvantages:

- Time-consuming

- Not scalable for massive datasets

3. Hybrid Evaluators

Hybrid evaluators combine automated metrics with human oversight, ensuring efficiency without sacrificing quality.

Advantages:

- Best of both worlds

- Improves accuracy and reliability

Disadvantages:

- Requires careful integration of tools and expert input
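A common way to combine the automated and manual approaches is to let the automated step handle confident predictions and route the rest to human reviewers. The sketch below assumes each prediction carries a confidence value between 0 and 1. The 0.8 threshold, the item IDs, and the function name `route_predictions` are all hypothetical choices for illustration.

```python
def route_predictions(predictions, confidence_threshold=0.8):
    """Split predictions into auto-accepted items and items needing human review."""
    auto_accepted, needs_review = [], []
    for item_id, label, confidence in predictions:
        if confidence >= confidence_threshold:
            auto_accepted.append((item_id, label))
        else:
            needs_review.append((item_id, label))
    return auto_accepted, needs_review


# Hypothetical model outputs: (item id, predicted label, confidence).
predictions = [
    ("doc-1", "spam", 0.97),
    ("doc-2", "ham", 0.55),
    ("doc-3", "spam", 0.81),
    ("doc-4", "ham", 0.62),
]

auto_accepted, needs_review = route_predictions(predictions)
print("auto-accepted:", auto_accepted)
print("sent to human review:", needs_review)
```

Raising the threshold sends more items to reviewers and lowers the risk of unreviewed mistakes; lowering it does the opposite. The right setting depends on reviewer capacity and the cost of an error.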

Challenges in AI Training Evaluation

Even with advanced evaluators, several challenges persist:

Data Quality Issues: Poor, incomplete, or biased data can mislead AI evaluation, resulting in inaccurate performance metrics. Evaluators may struggle to give reliable insights if the input data does not reflect real-world scenarios.

Overfitting and Underfitting: Overfitted models may perform well on training data but fail in real-world situations, while underfitted models perform poorly even on the training data. Evaluators need to detect both issues to ensure the model generalizes effectively and avoids over-reliance on training data.

Dynamic Environments: Changing conditions, user behavior, or market trends can affect model performance over time. Evaluators must account for evolving scenarios to maintain model accuracy and relevance.

Computational Costs: Evaluating large AI models, especially deep learning networks, requires significant computing resources. High costs can limit the frequency and depth of evaluation if not managed efficiently.

Interpretability: Complex models, particularly deep learning ones, can be hard to understand and explain. Evaluators face challenges in interpreting results and providing actionable insights for improvement.

Addressing these challenges is crucial for maintaining consistent AI performance.
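For the overfitting and underfitting challenge above, a first-pass check is to compare the training score with the validation score. The sketch below encodes that heuristic. The thresholds (`min_score=0.7`, `max_gap=0.1`) are arbitrary assumptions for illustration and would need to be chosen per task.

```python
def diagnose_fit(train_score, val_score, min_score=0.7, max_gap=0.1):
    """Rough heuristic label based on training and validation scores."""
    # Low scores on both sets suggest the model has not learned the pattern.
    if train_score < min_score and val_score < min_score:
        return "underfitting"
    # A large train/validation gap suggests the model memorized training data.
    if train_score - val_score > max_gap:
        return "overfitting"
    return "ok"


# Hypothetical (train, validation) accuracy pairs.
runs = {
    "run-a": (0.99, 0.72),
    "run-b": (0.60, 0.58),
    "run-c": (0.90, 0.87),
}

for name, (train, val) in runs.items():
    print(f"{name}: train={train:.2f} val={val:.2f} -> {diagnose_fit(train, val)}")
```

This is only a screening step. A flagged run still needs a closer look at learning curves and the data before drawing conclusions.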

Best Practices for Using AI Training Evaluators

To maximize the effectiveness of AI training evaluators, adopt these best practices:

Continuous Evaluation: Regularly monitoring AI model performance throughout training helps catch issues early. Continuous evaluation ensures the model adapts effectively and maintains high accuracy over time.

Use Multiple Metrics: Relying on a single metric can be misleading, especially with imbalanced datasets. Combining metrics like accuracy, precision, recall, and F1 score provides a comprehensive view of model performance.

Validate with Real-World Data: Evaluators should use datasets that reflect actual deployment conditions. This ensures the model performs reliably outside the controlled training environment.

Incorporate Feedback Loops: Integrating evaluation results back into the training process allows continuous improvement. Feedback loops help fine-tune models and enhance prediction quality over time.

Document Evaluation Results: Keeping detailed records of evaluation outcomes ensures transparency and accountability. Documentation also supports regulatory compliance and helps teams track improvements or issues systematically.
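Continuous evaluation and feedback loops often meet in early stopping: validation loss is checked after every epoch, and training halts once it stops improving. The sketch below shows the decision logic on a hypothetical list of per-epoch validation losses. The `patience` value of 2 is an assumption, and frameworks such as Keras provide a ready-made `EarlyStopping` callback for the same idea.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return (stop_epoch, best_epoch) using 0-based epoch indices.

    Training stops once `patience` epochs pass without a new best loss.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch
    # Ran out of epochs without triggering the stop condition.
    return len(val_losses) - 1, best_epoch


# Hypothetical validation losses recorded after each epoch.
val_losses = [0.90, 0.70, 0.60, 0.62, 0.65, 0.70]

stop_epoch, best_epoch = early_stopping_epoch(val_losses)
print(f"stop after epoch {stop_epoch}, keep weights from epoch {best_epoch}")
```

With these losses, the best epoch is index 2 and training stops at index 4, two epochs later, which avoids spending compute on epochs that only worsen validation loss.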

Popular Tools for AI Training Evaluation

Several tools streamline the evaluation process, each with unique features:

| Tool Name | Features | Best For |
|---|---|---|
| TensorBoard | Visualizes training metrics, loss, accuracy | Deep learning model evaluation |
| MLflow | Tracks experiments and model performance | Model lifecycle management |
| Weights & Biases (W&B) | Provides dashboards, collaboration | Team-based model evaluation and tracking |
| Scikit-learn | Provides metrics and tools for ML models | Traditional machine learning evaluation |
| Keras Callbacks | Customizable evaluation during training | Neural networks and deep learning |

Using these tools, AI developers can automate evaluations, visualize results, and make data-driven improvements.

How to Improve AI Performance Using Evaluators

AI training evaluators do not just measure performance; they provide actionable insights to enhance AI systems. Here’s how:

Hyperparameter Tuning: Evaluators help identify the optimal settings for learning rate, batch size, and other parameters. Adjusting these hyperparameters based on evaluation results improves model accuracy and efficiency.

Feature Engineering: By analyzing evaluation results, developers can determine which features contribute most to prediction errors. Refining, adding, or removing features enhances model performance and prediction reliability.

Data Augmentation: Evaluators reveal gaps or imbalances in training data. Using techniques like oversampling, rotation, or synthetic data generation helps the model generalize better to new inputs.

Model Architecture Optimization: Insights from evaluation highlight weaknesses in model structure. Modifying layers, nodes, or activation functions based on feedback can significantly boost predictive performance.

Continuous Retraining: AI models may degrade over time as data patterns evolve. Evaluators guide periodic retraining, ensuring the model remains accurate, adaptive, and effective in changing environments.
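Hyperparameter tuning driven by evaluation results can be as simple as a grid search: try each combination, score it on validation data, and keep the best. In the sketch below, `toy_validation_score` is a made-up stand-in for "train a model with these settings and return its validation score", so the numbers carry no real meaning. In practice a tool such as scikit-learn's `GridSearchCV` handles this along with cross-validation.

```python
from itertools import product


def grid_search(evaluate, grid):
    """Try every combination in `grid` and return (best_params, best_score)."""
    names = list(grid)
    best_params, best_score = None, float("-inf")
    for values in product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        score = evaluate(**params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


def toy_validation_score(learning_rate, batch_size):
    """Hypothetical stand-in for training a model and scoring it on validation data."""
    return 1.0 - abs(learning_rate - 0.01) * 10 - abs(batch_size - 32) / 1000


grid = {"learning_rate": [0.001, 0.01, 0.1], "batch_size": [16, 32, 64]}

best_params, best_score = grid_search(toy_validation_score, grid)
print(f"best settings: {best_params} (score {best_score:.3f})")
```

Grid search grows quickly with the number of hyperparameters, so random or Bayesian search is often preferred when each evaluation is expensive.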

Case Study: AI Training Evaluation in Practice

Consider a healthcare AI system predicting patient readmissions. Using a robust AI training evaluator:

1. Precision Improvement

Evaluators helped the healthcare AI system reduce false positives by carefully analyzing prediction errors. This ensured that only patients with genuine readmission risks were flagged, preventing unnecessary medical interventions and reducing operational workload for doctors and nurses.

2. Recall Enhancement

Evaluation revealed areas where the model was missing high-risk patients. By improving recall, the system became better at identifying those who truly needed attention, resulting in more accurate care decisions and preventing avoidable hospital readmissions.

3. Bias Detection

Evaluators checked model predictions across different patient groups, such as age, gender, or medical history, to ensure no demographic was being unfairly classified. This improved fairness in healthcare delivery and avoided biased risk assessments.

4. Continuous Optimization

Using evaluator feedback, data scientists fine-tuned model hyperparameters and adjusted training data. These improvements gradually boosted performance, leading to more reliable and trustworthy predictions over time.

5. Measurable Performance Gains

After applying evaluation insights, the healthcare facility achieved an 18% improvement in model accuracy. This demonstrated how structured evaluation not only improves prediction quality but also directly contributes to better real-world outcomes.
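The precision and recall improvements in a scenario like this one usually involve a trade-off controlled by the decision threshold: flagging fewer patients raises precision, while flagging more raises recall. The sketch below illustrates that trade-off on invented risk scores. It is not the data or method from the case study, and the function name `precision_recall_at` is hypothetical.

```python
def precision_recall_at(y_true, scores, threshold):
    """Precision and recall when scores >= threshold are flagged as positive."""
    tp = fp = fn = 0
    for t, s in zip(y_true, scores):
        predicted = 1 if s >= threshold else 0
        if predicted == 1 and t == 1:
            tp += 1
        elif predicted == 1 and t == 0:
            fp += 1
        elif predicted == 0 and t == 1:
            fn += 1
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Invented readmission labels (1 = readmitted) and model risk scores.
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.6, 0.3, 0.7, 0.4, 0.2, 0.1]

results = {th: precision_recall_at(y_true, scores, th) for th in (0.75, 0.5, 0.25)}
for th, (prec, rec) in results.items():
    print(f"threshold {th:.2f}: precision = {prec:.2f}, recall = {rec:.2f}")
```

At the strictest threshold every flagged patient is a true readmission but half are missed; at the loosest, all are caught at the cost of more false alarms. Choosing the operating point is a clinical and operational decision, not only a modeling one.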

Future Trends in AI Training Evaluation

1. Explainable AI (XAI)

Future evaluators will focus on making AI decisions more transparent and understandable. Instead of just giving results, AI models will explain why a prediction was made. This builds trust, improves accountability, and helps developers detect issues faster.

2. Real-time Evaluation

AI systems will be monitored continuously in real-time environments. This helps models quickly adapt to new data patterns, detect performance drops instantly, and maintain stable accuracy without delays.
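A minimal version of real-time monitoring is a rolling window over the most recent labeled predictions, with an alert when accuracy in that window falls below a floor. The sketch below assumes true labels arrive shortly after each prediction, which is not always the case in production. The class name, window size, and alert threshold are all hypothetical.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Track accuracy over the most recent `window_size` labeled predictions."""

    def __init__(self, window_size=5, alert_below=0.6):
        self.outcomes = deque(maxlen=window_size)  # 1 = correct, 0 = wrong
        self.alert_below = alert_below

    def accuracy(self):
        return sum(self.outcomes) / len(self.outcomes)

    def update(self, y_true, y_pred):
        """Record one labeled prediction; return True if an alert should fire."""
        self.outcomes.append(1 if y_true == y_pred else 0)
        # Only alert once the window is full, to avoid noisy early alarms.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy() < self.alert_below


# Hypothetical stream of (true label, predicted label) pairs.
stream = [(1, 1), (0, 0), (1, 1), (1, 0), (0, 0), (1, 0), (0, 1), (1, 0)]

monitor = RollingAccuracyMonitor(window_size=5, alert_below=0.6)
alerts = [monitor.update(t, p) for t, p in stream]

for step, fired in enumerate(alerts):
    if fired:
        print(f"step {step}: rolling accuracy dropped below threshold")
```

In this stream the alert first fires at step 6, once three of the last five predictions are wrong. A production monitor would typically also track input drift, since labels often arrive late.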

3. AI for AI Evaluation

Advanced AI tools will automatically evaluate other AI models, making the process faster and more efficient. This reduces manual work, minimizes human errors, and supports large-scale evaluation of complex models.

4. Ethical & Bias Audits

Future evaluators will include built-in fairness and ethics auditing tools. These ensure the AI does not discriminate, meets legal standards, and aligns with responsible AI practices for safer deployment.

Conclusion

An AI training evaluator is an essential component of any AI development workflow. It ensures models are accurate, fair, and reliable, mitigating risks associated with poor performance. By understanding evaluation metrics, choosing the right tools, and implementing best practices, organizations can maximize the potential of AI systems.

Investing time and resources in proper AI evaluation not only enhances model performance but also builds trust and ensures compliance with emerging ethical standards. For businesses aiming to leverage AI effectively, a robust evaluation strategy is no longer optional; it is essential.

FAQs

1. What is an AI training evaluator?

An AI training evaluator is a tool or system used to assess the performance, accuracy, and reliability of an AI model during its training phase. It helps identify strengths, weaknesses, and areas for improvement to optimize model outcomes.

2. Why is AI training evaluation important?

Evaluation ensures that the AI model performs as expected, avoids overfitting or bias, and delivers reliable predictions. Regular evaluation helps improve accuracy, efficiency, and overall performance before deployment.

3. What metrics are used in AI training evaluation?

Common metrics include accuracy, precision, recall, F1 score, and ROC-AUC. These metrics measure how well the model predicts outcomes, handles imbalanced datasets, and generalizes to unseen data.

4. How often should AI models be evaluated?

AI models should be evaluated throughout the training process (after each epoch or iteration) and again before deployment. Continuous evaluation ensures early detection of issues and better model performance.

5. Can AI training evaluators help reduce bias in models?

Yes. Evaluators can detect patterns of bias in predictions, allowing developers to adjust training data or algorithms to create fairer and more balanced AI models.

6. Are AI training evaluators only for developers?

No. While primarily used by data scientists and AI engineers, business analysts and decision-makers can also use insights from evaluators to understand model performance and make informed choices.

7. What are the challenges of AI training evaluation?

Challenges include handling large datasets, evaluating complex models, dealing with imbalanced or noisy data, and selecting the right metrics for specific AI applications.

8. How can I improve my AI model using an evaluator?

By analyzing evaluator reports, identifying weak areas, fine-tuning hyperparameters, adding better-quality data, and iteratively retraining the model, you can significantly improve AI performance.