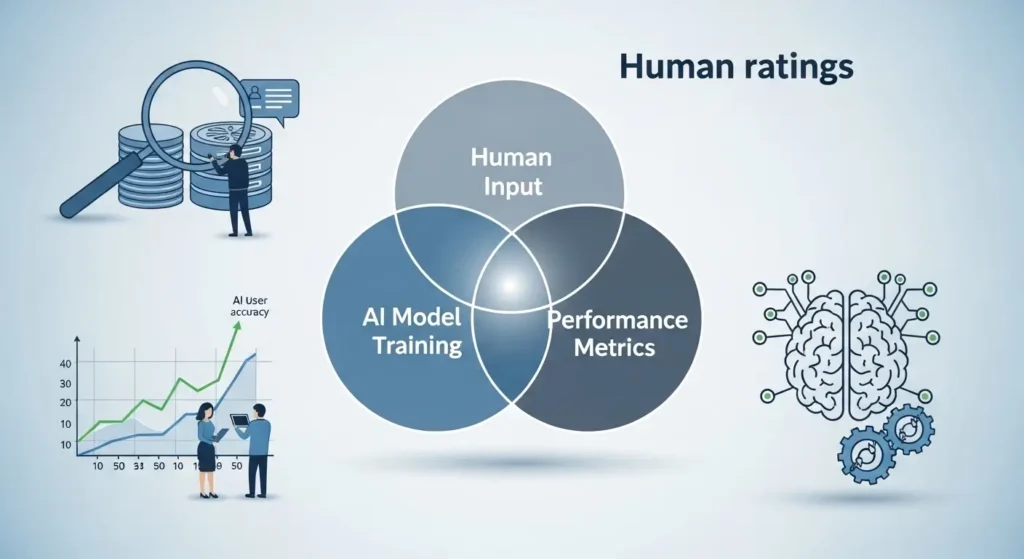

AI models are improved using human ratings through a process in which real people review and score AI responses to make them more accurate, helpful, and safe. These ratings show the model what good answers look like and what should be avoided. This process is often called human feedback training and is a key part of modern AI systems such as chatbots and search assistants.

In simple terms, humans teach AI how to behave better. By rating outputs based on quality, relevance, and safety, AI models adjust their responses over time. This helps them give clearer answers, reduce errors, and align better with human expectations. Without human ratings, AI would struggle to understand what users truly want.

What Are Human Ratings in AI Training

Human ratings in AI training refer to the process where people evaluate AI-generated responses and provide feedback.

This feedback usually includes:

• Relevance to the question

• Accuracy of information

• Clarity and usefulness

• Safety and appropriateness

These ratings are then used to refine the model.

When the ratings are used to train the model through reinforcement learning, the method is known as Reinforcement Learning from Human Feedback (RLHF). It plays a major role in improving modern AI systems.

How AI Models Are Improved Using Human Ratings

Understanding how AI models are improved using human ratings requires looking at a simple loop. Research shows that human feedback makes AI models more accurate in several ways, ranging from reducing hallucinations to improving tone and context awareness.

Step by Step Process

- AI generates a response

- Human reviewers rate the response

- Ratings are collected and analyzed

- The model updates based on feedback

- The cycle repeats to improve performance

Simplified Workflow Table

| Step | Action | Purpose |
|---|---|---|
| 1 | AI generates output | Initial response |
| 2 | Human reviews output | Evaluate quality |
| 3 | Rating assigned | Score performance |
| 4 | Model learns from ratings | Improve accuracy |
| 5 | Repeat process | Continuous improvement |
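
The loop above can be sketched in a few lines of Python. This is a toy illustration only: real systems update model parameters through training, whereas this sketch simply keeps one preference weight per response. The function name `run_feedback_loop`, the `learning_rate` parameter, and the sample ratings are all assumptions made for the example.

```python
from collections import defaultdict

def run_feedback_loop(candidates, rate, rounds=3, learning_rate=0.5):
    """Toy loop: nudge a per-response preference weight toward the human rating."""
    weights = defaultdict(float)  # stand-in for what the model has "learned"
    for _ in range(rounds):                      # step 5: repeat the cycle
        for response in candidates:              # step 1: AI generates output
            rating = rate(response)              # steps 2-3: human reviews and scores
            # step 4: move the stored weight part of the way toward the rating
            weights[response] += learning_rate * (rating - weights[response])
    return dict(weights)

# Hypothetical reviewer scores on a 1 to 5 scale
human_ratings = {"clear, correct answer": 5, "vague answer": 2}
weights = run_feedback_loop(list(human_ratings), human_ratings.get)
best = max(weights, key=weights.get)  # response the toy "model" now prefers
```

Each pass moves the weights closer to the reviewers' scores, which is the sense in which repeated rating leads to continuous improvement.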

Key Factors That Influence Human Ratings

Not all feedback is equal. Certain factors strongly influence how effective the improvement process is. Chief among these is the role of human judgment in AI trust scoring, which determines how well a model learns to distinguish reliable outputs from flawed ones.

Important Factors

• Quality of human reviewers

• Clear rating guidelines

• Diversity of feedback

• Consistency in scoring

• Volume of training data

Rating Criteria Table

| Factor | Description | Impact on AI |
|---|---|---|
| Accuracy | Correct information | Reduces errors |
| Relevance | Matches user intent | Improves usefulness |
| Clarity | Easy to understand | Enhances readability |
| Safety | Avoid harmful content | Builds trust |
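
As a rough sketch, the four criteria in the table could be combined into a single score with a weighted average. The weights below are hypothetical and chosen only for illustration; the article does not prescribe how the criteria should be weighted, and real rating guidelines may treat safety as a hard pass or fail instead.

```python
def overall_score(scores, weights):
    """Weighted average of per-criterion scores (all on the same scale)."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[name] * weights[name] for name in weights) / total_weight

# Hypothetical weights; not taken from any published rating guideline
criteria_weights = {"accuracy": 0.4, "relevance": 0.3, "clarity": 0.2, "safety": 0.1}

# One reviewer's scores for a single response, on a 1 to 5 scale
reviewer_scores = {"accuracy": 5, "relevance": 4, "clarity": 3, "safety": 5}

combined = overall_score(reviewer_scores, criteria_weights)
```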

Types of Human Feedback Used in AI

There are different ways humans provide ratings to AI systems.

Common Feedback Types

• Ranking responses from best to worst

• Giving scores based on quality

• Writing corrections or better answers

• Flagging harmful or incorrect content

Feedback Comparison Table

| Feedback Type | Example | Benefit |
|---|---|---|
| Ranking | Choose best response | Helps model prioritize |
| Scoring | Rate from 1 to 5 | Quantifies quality |
| Editing | Improve response | Teaches better output |
| Flagging | Mark unsafe content | Improves safety |
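
Ranking feedback is often turned into pairwise comparisons before it is used for training. The sketch below shows one common approach, assuming a Bradley-Terry style preference loss of the kind typically used for reward models in RLHF; the article itself does not specify a loss. The function names and the reward values passed in are illustrative assumptions.

```python
import math
from itertools import combinations

def ranking_to_pairs(ranked_responses):
    """Expand a best-to-worst ranking into (preferred, rejected) pairs."""
    # combinations() preserves input order, so the first item in each pair
    # is always the response the reviewer ranked higher.
    return list(combinations(ranked_responses, 2))

def pairwise_loss(reward_preferred, reward_rejected):
    """Bradley-Terry style loss: -log(sigmoid(preferred - rejected))."""
    margin = reward_preferred - reward_rejected
    return math.log1p(math.exp(-margin))

# A reviewer ranked response A best and response C worst
pairs = ranking_to_pairs(["A", "B", "C"])
```

The loss is small when the model scores the preferred response higher than the rejected one and grows when the order is reversed, which is how a ranking helps the model prioritize.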

Real Examples of AI Improving with Human Ratings

Example 1 Chatbots

Chatbots improve responses by learning from user ratings.

If users consistently rate certain answers as helpful, the AI starts generating similar responses more often.

Example 2 Search Engines

AI-powered search tools use human feedback to rank better answers.

They learn which results users find useful and adjust rankings accordingly.

Example 3 Content Generation

AI writing tools improve tone and clarity based on human corrections.

Editors refine outputs and the model learns to produce better content over time.

Why Human Ratings Are Important for AI

Human ratings act as a bridge between machine learning and real-world expectations. There are several reasons AI models still need human reviewers even as automation advances, including the need to detect cultural nuance, contextual errors, and evolving user intent that machines alone cannot catch.

Key Benefits

• Aligns AI with human values

• Improves accuracy and trust

• Reduces harmful or biased outputs

• Enhances user experience

Without human input, AI would rely only on raw data and patterns, which is not enough for high-quality responses.

Common Mistakes in Human Feedback Training

Even though this process is powerful, mistakes can reduce its effectiveness.

Common Issues

• Inconsistent rating standards

• Poor quality reviewers

• Limited feedback diversity

• Bias in human judgment

• Lack of clear instructions

These mistakes can lead to incorrect learning and weaker AI performance.

Best Practices to Improve AI Using Human Ratings

To get the best results, companies follow proven strategies.

Practical Tips

• Train reviewers with clear guidelines

• Use diverse and global feedback sources

• Regularly audit rating quality

• Combine human feedback with automated checks

• Continuously update training datasets

Example Best Practice Flow

| Practice | Result |
|---|---|
| Clear guidelines | Consistent ratings |
| Diverse reviewers | Better generalization |
| Continuous updates | Ongoing improvement |
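
One way to audit rating consistency is to measure how often two reviewers agree beyond what chance alone would produce. The sketch below computes Cohen's kappa for two reviewers who labeled the same responses. The sample labels are made up for illustration, and the choice of kappa as the audit metric is an assumption rather than something the practices above require.

```python
from collections import Counter

def cohens_kappa(ratings_a, ratings_b):
    """Chance-corrected agreement between two reviewers on the same items."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("both reviewers must rate the same non-empty set of items")
    n = len(ratings_a)
    # Fraction of items where the two reviewers gave the same label
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Agreement expected by chance, from each reviewer's label frequencies
    counts_a, counts_b = Counter(ratings_a), Counter(ratings_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both reviewers used one identical label throughout
    return (observed - expected) / (1 - expected)

# Hypothetical labels from two reviewers on four responses
reviewer_1 = ["good", "good", "bad", "bad"]
reviewer_2 = ["good", "bad", "bad", "bad"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
```

A kappa near 1 suggests the guidelines are producing consistent ratings, while a value near 0 suggests agreement is no better than chance and the guidelines or reviewer training may need attention.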

Challenges in Using Human Ratings

While effective, this method comes with challenges.

Key Challenges

• High cost of human labor

• Time-consuming process

• Subjectivity in ratings

• Scaling feedback for large models

Companies often combine human feedback with AI evaluation systems to solve these issues.
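
A minimal sketch of such a combination is shown below: an automated evaluator scores every response, and only the uncertain middle band is sent to human reviewers. The thresholds, the bucket names, and the `auto_score` callable are hypothetical; how a real system splits the work will depend on the evaluator and the risk involved.

```python
def route_for_review(items, auto_score, low=0.3, high=0.7):
    """Split items into auto-accepted, auto-rejected, and needs-human buckets."""
    buckets = {"accept": [], "reject": [], "human": []}
    for item in items:
        score = auto_score(item)  # assumed to return a value between 0 and 1
        if score >= high:
            buckets["accept"].append(item)
        elif score <= low:
            buckets["reject"].append(item)
        else:
            buckets["human"].append(item)  # uncertain: worth a reviewer's time
    return buckets

# Hypothetical automated scores for three responses
auto_scores = {"response_1": 0.95, "response_2": 0.10, "response_3": 0.55}
routed = route_for_review(list(auto_scores), auto_scores.get)
```

Routing only the uncertain cases to people is one way to reduce cost and review time while keeping human judgment where it matters most.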

Future of AI Improvement with Human Feedback

The way AI models are improved using human ratings is still evolving.

New trends include:

• Hybrid feedback systems combining AI and humans

• Real-time feedback from users

• Personalised AI learning from individual preferences

• Better tools for reviewer training

These advancements will make AI smarter and more aligned with user needs.

Conclusion

AI models improve significantly when human ratings are used as a feedback loop. These ratings help models understand what is useful, accurate, and aligned with human expectations. Instead of relying only on raw data, models learn from real human preferences, which makes their responses more relevant, safe, and context-aware. Over time, this process reduces errors, improves decision making, and ensures outputs feel more natural and trustworthy.

In the long run, human feedback becomes the bridge between machine intelligence and real-world usability. As AI continues to evolve, combining advanced algorithms with consistent human evaluation will remain essential. This collaboration not only enhances performance but also builds systems that better serve people across industries, from search engines to customer support and beyond.

Key Takeaways

• Human ratings guide AI toward better answers

• Continuous feedback improves performance over time

• Quality and consistency of ratings matter most

• Combining human and AI feedback is the future

• Businesses using this method gain more reliable AI systems

FAQs

1. What is the main goal of human ratings in AI?

The goal is to help AI understand what humans consider useful, accurate, and safe.

2. How does human feedback improve AI accuracy?

It guides the model to prefer correct answers and avoid mistakes through repeated learning.

3. Is human feedback still needed with advanced AI?

Yes, human input is essential to ensure quality, safety, and real-world relevance.

4. What is reinforcement learning from human feedback?

It is a method where AI learns from human ratings to improve its responses over time.